In the first part of this blogpost we discuss the idea of using smartNIC solutions to optimize network performance in a data center. In the second part, we review the currently available (July 2020) smartNIC solutions that can be programmed with P4.

The need to further optimize the data center

Edge computing has been gaining in popularity of late. The term can refer to many sub-technologies and carry many meanings. One of them is the existence, alongside the large, centralized data center, of small or very small data centers located closer to the end user. With a data center in closer proximity, services that require significantly lower latency can have their performance guaranteed.

That your workloads were being processed in a data center (in the form of a private DC or in the cloud) was a clear sign of technological advancement just a few years ago. Today, we are paying more and more attention to the internal optimization of the data center itself. Various initiatives have been observed for several years, aimed at cost optimization (e.g. increased interest in whitebox solutions, OCP hardware deployment), greater operational flexibility (e.g. relying on open source software, avoiding vendor lock-in), and more efficient workload processing. This touches on aspects of data-center architecture at both the physical (infrastructure) and logical (workload handling) levels. It turns out that the idea of edge computing can also apply here.

To better understand this, let's imagine that we are deploying a network function as a full virtual appliance following an NFV paradigm. We assume that the network in our data center is a classic Clos network based on a leaf-spine topology. We instantiate the VNF on a given server connected to the selected leaf switch. It quickly becomes apparent that ‘the trombone effect’ is present for many paths corresponding to the traffic flows that have to be forwarded via that VNF. This analogy from the world of music illustrates how traffic is forced to traverse a network segment and come back again, close to its source (hence the comparison to the path air takes through a trombone). It results in increased latency and may affect the overall performance of some workloads. This is because the VNF is located at a specific point within the network topology of our DC, which is not always optimal for some groups of connections between end-points (VMs or containers) in the DC.
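The effect can be made concrete with a small back-of-the-envelope model. The sketch below is only an illustration under simplifying assumptions: a two-tier leaf-spine fabric, end-points and the VNF on different servers, and path length counted in links. The function names `link_hops` and `path_via_vnf` are hypothetical.

```python
def link_hops(leaf_a: int, leaf_b: int) -> int:
    """Links traversed between two different servers in a leaf-spine fabric.

    Same leaf:        server -> leaf -> server                   (2 links)
    Different leaves: server -> leaf -> spine -> leaf -> server  (4 links)
    """
    return 2 if leaf_a == leaf_b else 4


def path_via_vnf(src_leaf: int, dst_leaf: int, vnf_leaf: int) -> int:
    """Links traversed when traffic must pass through a VNF on its way."""
    return link_hops(src_leaf, vnf_leaf) + link_hops(vnf_leaf, dst_leaf)


# Two VMs attached to leaf 0; the VNF lives on a server attached to leaf 3.
direct = link_hops(0, 0)
tromboned = path_via_vnf(0, 0, 3)
print(f"direct path: {direct} links, path via VNF: {tromboned} links")
```

In this toy model, two end-points under the same leaf are two links apart, yet their traffic crosses the spine layer twice once it is steered through a VNF attached to another leaf.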

To mitigate this phenomenon, an alternative approach can be applied. The same network function can be deployed, but in a more distributed fashion, e.g. directly on the fabric switches (see Figure 1) if they offer the required flexibility to do so. Thanks to this, the network function is reachable somewhat closer to the vast majority of end-points in the DC than in the previous scenario. The implementation of the routing function in the openCORD project is a good illustration of this approach.

By applying this paradigm, the level of distribution of a given network function can be further increased. This means the function can be deployed on the NICs (Network Interface Cards) installed in the compute nodes (see Figure 2). Enter the concept of edge computing: the functions are instantiated at the border between the intra-DC network and the servers (the network-server edge). Of course, to make this possible, smartNICs should be used, especially those that are programmable and offer the computing resources required. Programmability is especially important when the configuration on the smartNIC needs to be changed quickly. Such a change may be needed because the distribution of workloads throughout the entire data center is dynamic and evolves over time.

SmartNICs with P4 support make it possible to express how the network dataplane has to process packets using the P4 language, which is gaining in popularity today. In the following sections of this blog post, we briefly present several smartNICs that can be programmed with P4. Our intention is to show the solutions available today (July 2020) and the range of choices you have if you think that using P4-aware smartNICs might be an option for any of your use cases.

Here, we would like to encourage you to check our P4 solution services.

Pensando DSC

The Pensando P4 platform is based on Pensando’s DSC (Distributed Services Card), a PCIe card powered by a custom-designed, P4-programmable processor called Capri. Pensando is currently offering the DSC in two variants: DSC-100 with 2 x 40/100 GbE interfaces and DSC-25 with 2 x 10/25 GbE interfaces (see Figure 3).

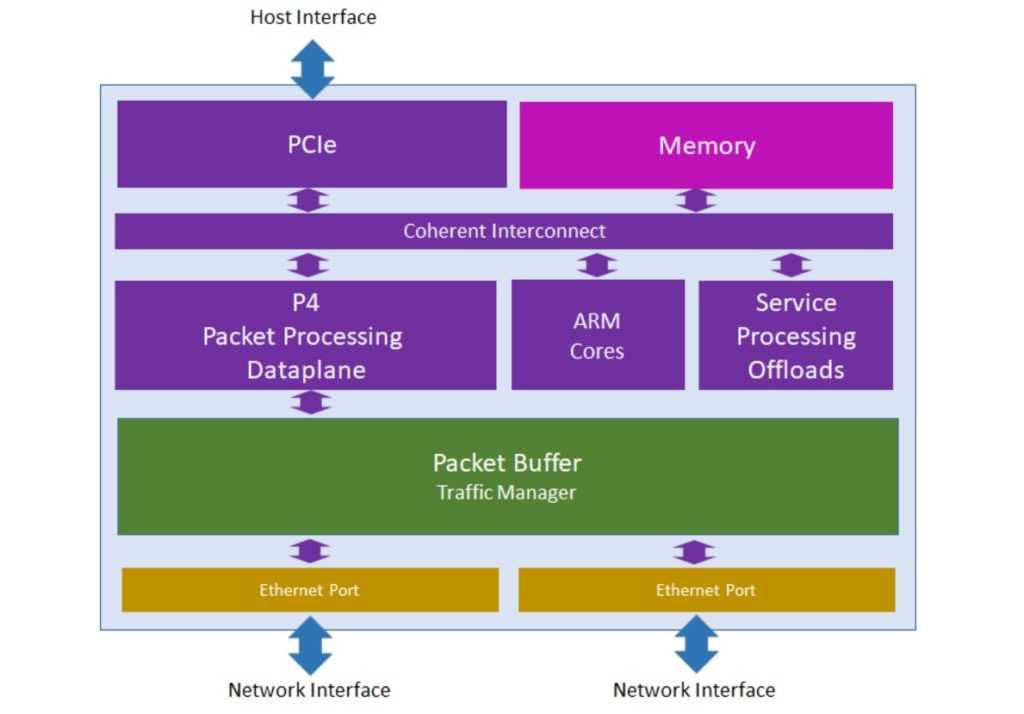

The DSC logical architecture is presented in Figure 4. There are three processing modules within the DSC design. The fast-path packet processing is offered by the P4 Packet Processing Data Plane unit, which contains a set of programmable pipelines. If more sophisticated processing is needed, a set of ARM Cores can be employed additionally. Specialized hardware modules (Service Processing Offloads) can be utilized to execute some specific functions like cryptographic or compression operations. All of these processing units can access the card Memory via Coherent Interconnect. The Memory can be utilized, for instance, to store full packets when packet payload also needs to be processed (this might be the case for some scenarios) or to keep state information when stateful processing of packets takes place.

For communication with the card’s host system, the PCIe interface is used. In turn, traffic is sent to and received from the hosts in the network through the Ethernet interfaces. For the latter case, the Packet Buffer is used. Its role is to buffer packets received from the network on the Ethernet ports and deliver them to the P4-programmable data plane, and to do the same in the opposite direction. The DSC exposes a REST and a gRPC API. The card can be managed by a remote controller, e.g. the Policy and Services Manager (PSM), which can be supplied by Pensando, or by a 3rd-party controller. The control and management operations can be executed through either the network interfaces or the PCIe interface.

As the company says, "In order to fully leverage the versatility of the specialized processors deployed in the pipeline and the tight integration with other components of the card, programming relies on an extension of the P4 language". At the current stage of development, the Pensando solution is not open to fully custom P4 applications written by customers themselves. However, Pensando does plan to open the platform to programming by users, and will do so via a development kit that enables the compilation and deployment of custom P4 code on the card (see the press release).

For more information about Pensando’s DSC, see the product briefs for DSC-25 and DSC-100.

Netronome Agilio

Netronome offers its P4-programmable devices under the Agilio SmartNICs line of products, which includes three series: CX (see Figure 5), FX and LX. The heart of those devices is Netronome's flagship silicon chip called NFP (Network Flow Processor or Netronome Flow Processor). The CX series is the most diverse family, with the low-end NFP-4000 matched with 2 GB of DDR3 RAM. The network interfaces available here vary from 2 x 10 GbE to 2 x 40 GbE. The cards are available in a low-profile PCI and in an OCPv2 form factor. The FX series currently includes only one card, a modified version of the Agilio CX 2 x 10 GbE with an additional quad-core ARMv8 processor and 2 GB of on-board RAM. The LX series is the most powerful family and is built as a high-profile PCI card with 2 x 40 GbE or 1 x 100 GbE interfaces and NFP-6480 silicon with 8 GB of DDR3-1866 MHz DRAM with ECC.

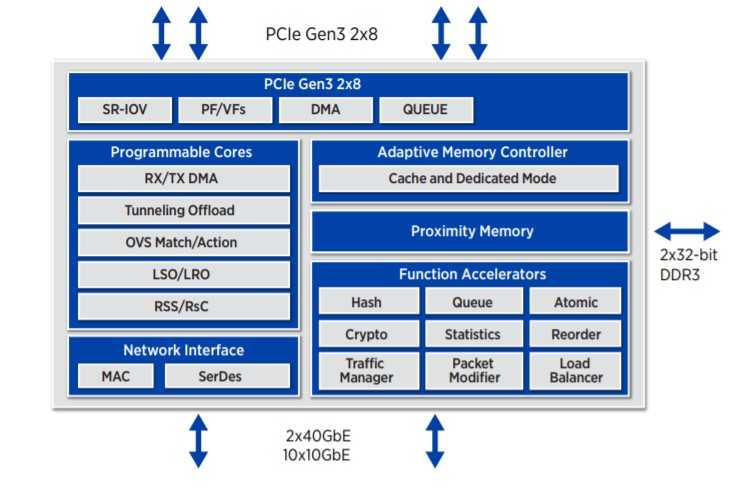

The NFP silicon is a series of RISC-based, multi-threaded, multicore flow processing units designed to handle traffic up to 200 Gbps. The chip is powered by up to 120 packet processing and 96 flow processing cores. Each core has direct access to the RX/TX queues, with LSO/LRO (Large Send/Receive Offload) and RSS (Receive Side Scaling) mechanisms supported. Additionally, Open vSwitch-like match/action operations can be executed here with cached access to a maximum of three banks of 64-bit DDR3-2133 ECC RAM. Other capabilities offered by the card include hardware acceleration for cryptographic and hash operations, statistics gathering and load balancing. Figure 6 breaks down the characteristics.
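To give a feel for what RSS does, here is a minimal sketch of the general idea: hash a flow's 5-tuple and use the result to pick a receive queue, so that all packets of one flow land on the same queue. It is not Netronome's implementation. Real NICs typically use a Toeplitz hash with a configurable key and an indirection table, whereas this sketch substitutes CRC32 for brevity, and the name `rss_queue` is hypothetical.

```python
import socket
import struct
import zlib


def rss_queue(src_ip: str, dst_ip: str, src_port: int, dst_port: int,
              proto: int, num_queues: int) -> int:
    """Map a flow's 5-tuple to a receive queue index.

    CRC32 stands in for the hash function here (an assumption made for
    brevity); hardware RSS usually relies on a Toeplitz hash instead.
    """
    key = (socket.inet_aton(src_ip) + socket.inet_aton(dst_ip)
           + struct.pack("!HHB", src_port, dst_port, proto))
    return zlib.crc32(key) % num_queues


# Every packet of the same TCP flow is steered to the same queue.
flow = ("10.0.0.1", "10.0.0.2", 49152, 443, 6)
print("queue for flow:", rss_queue(*flow, num_queues=8))
```

Because the queue index depends only on the 5-tuple, per-flow packet ordering is preserved while different flows are spread across cores.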

The programmable cores aggregated in the silicon can be programmed with P4 code, which can be extended with functions written in MicroC and/or assembly using the Agilio Software Development Kit. (Note: another option is to program the processor exclusively with MicroC/assembly code.) The current implementation of P4_16 in the Agilio SDK was released as the 6.1-preview version (December 2018) and is based on a pre-release draft of the P4_16 1.0.0 specification. In our lab tests, we found that P4_16 features are only partially supported by the card. The runtime layer of the Agilio SDK stack implements Apache Thrift and gRPC servers for populating dataplane resources. However, because the current gRPC implementation does not fully support the P4Runtime specification, Thrift-based communication is preferable.

It is worth noting that Netronome is a founder and member of the Open-NFP initiative, which also conducts research on P4 for NFP modules. The Open-NFP is “a worldwide, community-driven organization that enables open and collaborative research in the area of network function”.

Netcope P4 + Intel PAC N3000

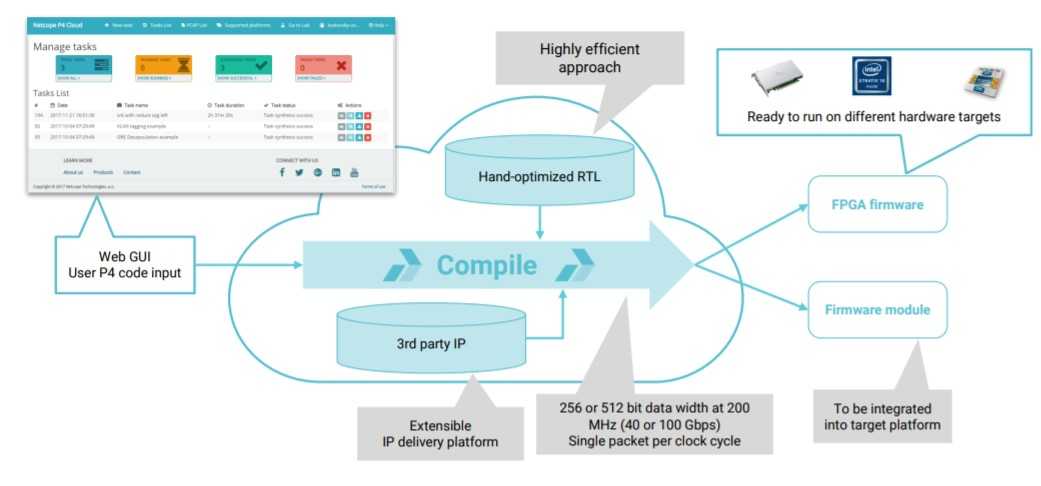

The Netcope P4 solution is a framework that enables the programming of packet processing pipelines on FPGA targets with the use of the P4 language. The idea is to allow users to write P4 code for various FPGA-based smartNICs. Such an approach does not require the user to have any expert knowledge of how the given hardware is designed or what’s under the proverbial hood of the FPGA chip. Not knowing a hardware description language (HDL) is not a blocker either. What the user needs to do is upload the P4 code to Netcope’s P4 platform, which contains the P4-to-HDL compiler. When the P4 code synthesis process is finished, the user can download the optimized firmware and load it into the given FPGA-based smartNIC. This is a firmware-as-a-service approach. The compiler can generate two possible outputs:

- Bitstream—for a set of supported platforms (e.g. Intel PAC N3000 card) it is possible to compile final FPGA bitstream directly

- Netlist—additional 3rd party IP (Intellectual Property) cores (blocks of logic offering some functionality; they can be reused in different FPGA-based designs) can be added before final bitstream compilation, allowing for some customization of the design

Additionally, the Netcope P4 platform offers auto-generation of the P4 target’s API. It can be used by external software to populate the dataplane match-action tables at runtime. One of the solution’s most important advantages is that it can reduce the total time required to implement a given dataplane functionality as compared to the traditional HDL-based approach. This is thanks to the use of a high-level language like P4. Such an approach is much more convenient and less error-prone than the HDL coding process.
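What "populating a match-action table at runtime" means can be sketched with a small software model. The code below is not the Netcope-generated API, whose exact shape is not covered here. `ExactMatchTable`, `add_entry`, `delete_entry` and `lookup` are hypothetical names used only to show the division of labour: the control plane installs entries, and the data plane looks up a key per packet and falls back to a default action on a miss.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple

# An action is modelled as (action name, action parameters).
Action = Tuple[str, tuple]


@dataclass
class ExactMatchTable:
    """Toy model of an exact-match table defined in a P4 program."""
    name: str
    default_action: Action = ("drop", ())
    entries: Dict[bytes, Action] = field(default_factory=dict)

    def add_entry(self, key: bytes, action: str, *params) -> None:
        """Control-plane side: install or overwrite an entry."""
        self.entries[key] = (action, params)

    def delete_entry(self, key: bytes) -> None:
        """Control-plane side: remove an entry if it exists."""
        self.entries.pop(key, None)

    def lookup(self, key: bytes) -> Action:
        """Data-plane side: return the matching action or the default."""
        return self.entries.get(key, self.default_action)


# External software fills the table while the data plane is running.
ipv4_forward = ExactMatchTable("ipv4_forward")
ipv4_forward.add_entry(bytes([10, 0, 0, 1]), "forward", 1)
ipv4_forward.add_entry(bytes([10, 0, 0, 2]), "forward", 2)

print(ipv4_forward.lookup(bytes([10, 0, 0, 2])))
print(ipv4_forward.lookup(bytes([192, 168, 0, 1])))
```

The table layout (key fields, available actions) is fixed by the P4 program at compile time; only the entries change at runtime, which is why an API generated from the P4 code is a natural fit.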

One of the HW platforms supported by Netcope P4 is the Intel PAC (Programmable Acceleration Card) N3000. The card is intended mostly for network-related cases and is normally proposed by Intel as a solution for improving performance in various NFVI environments, since it offers acceleration capabilities for VNFs. The N3000 is based on the Arria 10 FPGA and is available in different variants of network interface configuration: 8 x 10 GbE, 2 x 2 x 25 GbE, 4 x 25 GbE. According to the press release: “Netcope P4 is now specifically optimized to take advantage of the N3000 unique features, offering large capacities of lookups in off-chip DDR memories and achieving line-rate packet processing with low latency and resource utilization.”

If you want to read more about Netcope P4 and Intel PAC N3000 solutions, check out this tutorial and the Intel FPGA Programmable Acceleration Card N3000 Data Sheet.

Xilinx Alveo

P4-capable devices from Xilinx are FPGA-based SmartNICs belonging to the Alveo line of products. Lower versions of Alveo cards are armed with Xilinx Zynq® UltraScale+™ FPGA circuits while the higher ones embed the custom-built Xilinx UltraScale+ FPGA, which is exclusive to the Alveo line. As for the network interfaces configuration, the Alveo U25 card (see Figure 9) is available with 2 x 10/25 GbE interfaces and all higher versions are equipped with one or two 100 GbE interfaces.

Xilinx provides its own FPGA-targeted framework for defining packet processing within a data plane. It is called SDNet, and part of it is the P4 SDNet compiler. As the company lays it out in the SDNet documentation, “The Xilinx® SDNet™ data plane builder generates systems that can be programmed for a wide range of packet processing functions, from simple packet classification to complex packet editing.” From the P4 perspective, the SDNet environment offers a few basic architecture models which can be used by P4 programmers. Those architectures include XilinxSwitch, XilinxStreamSwitch and XilinxEngineOnly, which can all be extended with code written in Verilog. The first two are very close to each other. They both define a package containing blocks similar to those that the Very Simple Switch (VSS) architecture (see the P4_16 Specification) is built around. XilinxEngineOnly is the most open of the three models, as it does not define any structured forwarding pipeline. Developers can thus define it according to their needs.
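For readers unfamiliar with VSS, its package is organised around a parser, a match-action pipeline and a deparser. The following Python model sketches that three-stage structure for a plain Ethernet frame. It is not SDNet or P4 code, and it makes simplifying assumptions: L2 forwarding only, a dictionary standing in for the match-action table, and hypothetical function names.

```python
import struct
from typing import Dict, Optional, Tuple

# Ethernet header: destination MAC, source MAC, EtherType.
ETH_HEADER = struct.Struct("!6s6sH")


def parse(packet: bytes) -> Tuple[dict, bytes]:
    """Parser: extract the Ethernet header, leave the rest as payload."""
    dst, src, ethertype = ETH_HEADER.unpack_from(packet)
    headers = {"dst": dst, "src": src, "ethertype": ethertype}
    return headers, packet[ETH_HEADER.size:]


def match_action(headers: dict, l2_table: Dict[bytes, int],
                 ingress_port: int) -> Optional[int]:
    """Match-action stage: exact match on destination MAC.

    Returns the egress port, or None when the packet should be dropped
    (table miss, or the entry points back at the ingress port).
    """
    egress = l2_table.get(headers["dst"])
    if egress is None or egress == ingress_port:
        return None
    return egress


def deparse(headers: dict, payload: bytes) -> bytes:
    """Deparser: serialise the headers back in front of the payload."""
    return ETH_HEADER.pack(headers["dst"], headers["src"],
                           headers["ethertype"]) + payload


def process(packet: bytes, ingress_port: int,
            l2_table: Dict[bytes, int]) -> Optional[Tuple[int, bytes]]:
    """Run one packet through parser -> match-action -> deparser."""
    headers, payload = parse(packet)
    egress = match_action(headers, l2_table, ingress_port)
    if egress is None:
        return None
    return egress, deparse(headers, payload)


dst_mac = bytes.fromhex("020000000002")
src_mac = bytes.fromhex("020000000001")
frame = dst_mac + src_mac + b"\x08\x00" + b"payload"
print(process(frame, ingress_port=1, l2_table={dst_mac: 2}))
```

An architecture such as XilinxEngineOnly, by contrast, would not prescribe this fixed sequence of stages, leaving the pipeline structure to the developer.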

Summary

The above solutions differ from each other primarily in terms of their internal architecture. No surprise there. The smartNICs with programmable processors on-board like Pensando DSC or the Netronome Agilio product families offer native support for P4. With FPGA-based platforms such as the Intel PAC N3000 and Xilinx Alveo, on the other hand, a dedicated framework (Netcope P4 and SDNet respectively) is required to write P4 code targeted for them. Depending on your needs and preferences, you can use one of the solutions even today to optimize network performance in your DC. It can be assumed that over time new solutions offering support for P4 will also appear on the market.