In infrastructure environments, “we implemented MCP” is not a security architecture.

MCP is a genuinely useful interoperability layer. It standardizes how AI systems discover and invoke tools, lowering integration costs and reducing custom glue code. But interoperability is not the same as operational safety. In environments where tools can operate routers, switches, firewalls, and other control planes, that gap is large enough to cause real incidents.

This article examines six security gaps that MCP does not solve by itself:

- Missing per-tool and per-action authorization standards

- Confused deputy risk in LLM tool use

- Unsafe credential patterns on infrastructure devices

- Weak device-side enforcement over SSH and CLI

- Fragmented audit trails across agent, server, and device logs

- Overly broad tool discovery exposing attack surface

MCP gives you a standard way to integrate tools. You still need to build authorization, credential isolation, downstream enforcement, and auditability around it. That is the actual security work.

Why MCP security is different for network infrastructure

Model Context Protocol (MCP) is one of the most useful ideas to emerge in AI tooling in a long time. It gives AI applications a common way to discover and invoke tools: to read files, query systems, fetch context, and execute actions. That standardization matters because it lowers integration costs, reduces custom glue code, and makes it easier to move from a chatbot with plugins toward an operational agent.

That is the upside. The challenge is that most MCP examples, tutorials, and demo servers are built around software tools with a relatively contained blast radius: local files, test databases, issue trackers, and developer APIs. In that world, a bad tool call usually means a failed request, a malformed response, or an embarrassing bug.

Infrastructure devices are different.

When an MCP tool calls a REST API and gets a 403, the worst case may be a harmless error. When an MCP tool connects to a core router over SSH and enters configuration mode, the worst case is an outage.

That does not make MCP bad. It means that protocol interoperability is not the same thing as operational safety, and this post focuses on the gap between the two. If your tools touch routers, switches, firewalls, load balancers, or other infrastructure control planes, MCP compliance alone is not enough: the required authorization, credential, enforcement, and audit controls still have to be built around the protocol.

The core MCP security gap most teams miss

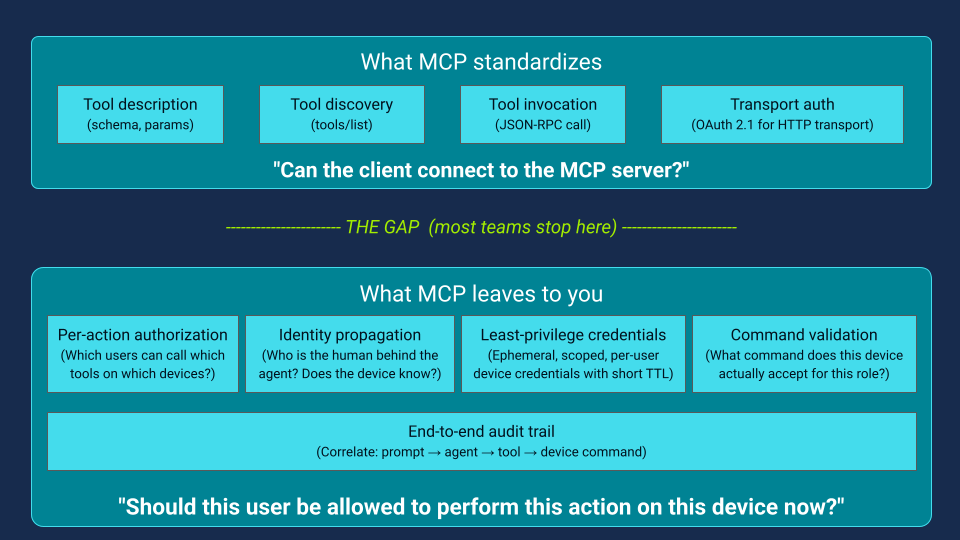

Every MCP deployment raises two separate security questions:

- Can the client connect to the MCP server?

- Should this user be allowed to perform this action on this device right now?

MCP helps with the first question (Figure 1), especially for protected remote servers using standard web auth patterns. Its authorization model applies at the transport layer for HTTP-based transports. It is not a universal policy engine for downstream actions.

In low-risk developer tooling, that gap is often manageable. In infrastructure operations, it becomes critical because the downstream action may be an SSH command on a production device. If you collapse these into one rule—“the agent is connected, so it can act”—you’ve already broken the security model.

What MCP standardizes vs. what it leaves to you

MCP standardizes how tools are described, discovered, and invoked (Figure 1). That’s a huge win. It also now includes stronger guidance and standards-based authorization patterns for protected remote server scenarios.

But MCP does not (and realistically cannot) standardize your organization’s operational policy for things like:

- which users can run which tools

- which tools can target which devices/environments

- which commands are allowed for which roles

- how user identity is propagated to downstream systems

- how to correlate a prompt, an agent decision, a tool invocation, and a device command log entry

Those are integration and control-plane problems, not just protocol problems. The risk comes when teams assume protocol-level standardization implies these policy questions are solved automatically. They aren’t.

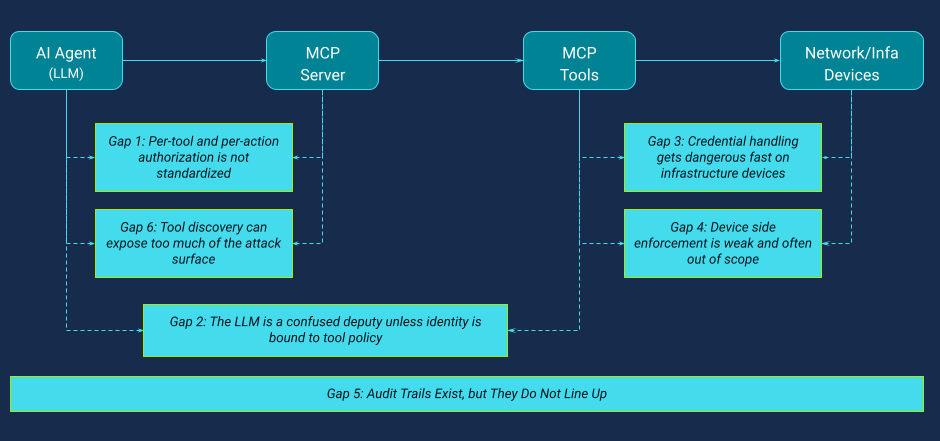

Six MCP security gaps that matter for infrastructure operations

Figure 2 summarizes the major security gaps discussed in this article. Each gap is manageable on its own, but in practice, they often combine into a failure chain that is hard to prevent and even harder to investigate.

Gap 1: MCP has no per-tool authorization standard

Many teams hear “MCP supports authorization” and assume “MCP handles access control.” That is too optimistic. As noted in the core distinction above, MCP primarily addresses whether a client can talk to an MCP server; it does not standardize a portable policy model for deciding whether a specific user may perform a specific action on a specific target at a specific time. That gap is easy to miss in demos and quickstarts, but risky in production.

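```python
from mcp.server.fastmcp import FastMCP

mcp = FastMCP("my-network-tools")


@mcp.tool()
def run_command_on_device(device: str, command: str) -> str:
    """Run a command on a device."""
    # Anti-pattern: no check of user, role, target, or command before execution.
    # ssh_to_device is assumed to be an SSH helper defined elsewhere.
    return ssh_to_device(device, command)
```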

In a sandboxed developer workflow, the above code may be acceptable. In infrastructure operations, it is not. The tool may have direct access to production systems, but no built-in understanding of user role, device criticality, command risk, environment scope (lab/staging/prod), or approval and change-window requirements. If you do not add those checks yourself, the effective policy becomes: if the agent can invoke the tool, the action is allowed. That is not authorization. It is reachability.

The issue is not a flaw in MCP itself. It is the gap between what teams often assume MCP provides and what it actually standardizes. In infrastructure operations, that gap is large enough to matter.
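To make the difference between reachability and authorization concrete, here is a minimal sketch of a server-side decision function. The role names, the environment-to-role mapping, and the production change window are all hypothetical, and nothing here is part of MCP or FastMCP. It only illustrates the kind of inputs a check needs before a tool such as run_command_on_device is allowed to run.

```python
from dataclasses import dataclass
from datetime import datetime, time, timezone

# Hypothetical policy data; a real deployment would load this from a policy store.
KNOWN_ROLES = {"viewer", "operator", "admin"}
WRITE_ROLES_BY_ENV = {
    "lab": {"operator", "admin"},
    "staging": {"operator", "admin"},
    "prod": {"admin"},
}
PROD_CHANGE_WINDOW_UTC = (time(1, 0), time(4, 0))  # assumed window, 01:00-04:00 UTC


@dataclass(frozen=True)
class ToolRequest:
    user_role: str
    tool: str
    environment: str        # "lab", "staging", or "prod"
    is_write: bool
    requested_at: datetime  # assumed to be timezone-aware UTC


def authorize(req: ToolRequest) -> tuple[bool, str]:
    """Return (allowed, reason) using only deterministic inputs."""
    if req.user_role not in KNOWN_ROLES:
        return False, "unknown role"
    if req.environment not in WRITE_ROLES_BY_ENV:
        return False, "unknown environment"
    if not req.is_write:
        return True, "read-only action"
    if req.user_role not in WRITE_ROLES_BY_ENV[req.environment]:
        return False, f"role {req.user_role!r} may not write in {req.environment}"
    if req.environment == "prod":
        start, end = PROD_CHANGE_WINDOW_UTC
        if not (start <= req.requested_at.time() < end):
            return False, "outside production change window"
    return True, "allowed"


# Example: an operator asks for a write action in prod during the window.
request = ToolRequest("operator", "request_interface_shutdown", "prod", True,
                      datetime(2025, 1, 1, 2, 0, tzinfo=timezone.utc))
print(authorize(request))
```

In this sketch the operator is denied even inside the change window, because the assumed policy reserves production writes for the admin role. The decision depends on who is asking and where, not on whether the tool is reachable.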

Gap 2: Why LLMs are confused deputies without identity-bound tool policy

The confused deputy problem is not new, but MCP makes it easier to trigger in real operational workflows. In an agentic workflow, the model interprets user instructions and decides when to call tools. If it has access to a powerful tool and there is no strong policy check at invocation time, it can act beyond the user’s intended or permitted authority.

Consider a simple example. A read-only operator says, “There is a loop on spine 01. Shut down eth1 now.” The model sees urgency and a plausible fix. If the agent can use a write-capable tool, and the server does not enforce role- and action-level authorization, the model may call the tool. It does not do this because it is malicious. It does it because it is doing what it was designed to do: follow instructions and complete the task. That is exactly why the model is not an authorization engine. It cannot reliably enforce organizational permission boundaries, change windows, approval requirements, or environment restrictions unless those controls are enforced outside the model.

The risk becomes much worse under prompt injection. Untrusted text—support tickets, logs, docs, comments, chat transcripts—can influence the model’s decisions. If a privileged agent consumes attacker-controlled content and your tool policy is weak, the model becomes a very efficient confused deputy.

A widely discussed 2025 Supabase Cursor MCP scenario illustrates the pattern: an agent with elevated database privileges ingests attacker-controlled support-ticket text and can be induced to perform unintended SQL actions, including exfiltrating sensitive data through the same workflow. In that scenario, the agent follows the injected instructions because it operates with broad privileges and lacks the policy context needed to reject them.

MCP’s security guidance does discuss confused deputy risks and prompt-injection-related controls in specific contexts (for example, proxy consent flows). But MCP does not provide a general mechanism that binds a user’s identity, role, and runtime context to each individual tool invocation in a portable way.

So the security boundary cannot be “what the user asked” or “what the model decided.” It has to be a deterministic policy check on every call: user identity, target, action, parameters, and context. If your main guardrail is “the model usually won’t do that,” you do not have a guardrail. You have a hope-based control.
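One way to get a deterministic check on every call is to wrap each tool in a server-side guard that reads the caller's role from the authenticated session rather than from anything the model produced. The sketch below uses only the Python standard library. The names current_role, require_role, and call_as, along with the role names, are hypothetical, and the tool body is a placeholder rather than a real device action.

```python
import functools
from contextvars import ContextVar
from typing import Callable

# Set by the server from the authenticated session, never from model output.
current_role: ContextVar[str] = ContextVar("current_role", default="anonymous")


def require_role(*allowed: str) -> Callable:
    """Decorator that rejects the call unless the session role is allowed."""
    def decorator(func: Callable) -> Callable:
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            role = current_role.get()
            if role not in allowed:
                raise PermissionError(f"role {role!r} may not call {func.__name__}")
            return func(*args, **kwargs)
        return wrapper
    return decorator


@require_role("network_admin")
def request_interface_shutdown(device: str, interface: str) -> str:
    # Placeholder: a real tool would submit the change through a controlled path.
    return f"shutdown requested for {interface} on {device}"


def call_as(role: str, func: Callable, *args, **kwargs):
    """Run func with the given session role, restoring the previous role afterwards."""
    token = current_role.set(role)
    try:
        return func(*args, **kwargs)
    finally:
        current_role.reset(token)
```

With a guard like this, the read-only operator's request to shut down eth1 fails with PermissionError no matter how urgent the prompt sounds, because the decision never passes through the model.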

Gap 3: Shared credentials and the MCP privilege concentrator problem

Most MCP security discussions focus on client-server authentication, token flows, and prompt injection. Those are important, and the MCP security materials emphasize them for implementers. But in infrastructure operations, one of the most dangerous failure modes is often much simpler: what credentials the tool uses when it touches the device. MCP does not standardize how device credentials (SSH keys, API tokens, certificates, passwords) should be managed, scoped, rotated, or tied to individual users.

That leaves teams to solve credential handling themselves, and in practice, many start with whatever makes the prototype work quickly: a shared SSH key mounted into the MCP server container, a long-lived admin credential in an environment variable, a broad API token reused across users and sessions, or a single automation account with access to everything. These patterns make demos easy, but in production, they can quietly turn the MCP server into a concentration point for privileged access.

The first problem is the loss of identity and least privilege at the device boundary. Device logs often show only a generic automation identity, such as an mcp-agent account, rather than the human who initiated the action. At the same time, the tool runs with the permissions of the shared credential, not the permissions of the requesting user, so role boundaries that exist upstream can disappear at the point of execution.

The second problem is persistence and brittle operations. Long-lived shared credentials mean that a compromise of the MCP server can become durable access to infrastructure devices, rather than a short-lived incident. Because those credentials are often reused across many devices and agent instances, rotation and revocation become painful enough that teams delay them, which extends exposure even further.

This is especially acute in SSH-based network operations, where shared automation identities are still common and fine-grained IAM is less mature than in many cloud-native environments. The result is that an MCP server can quietly become a privilege concentrator: a single service holding broad and persistent access to the control plane, unless credential design is treated as a primary security control.

Gap 4: Why SSH/CLI enforcement falls short for MCP servers and AI agents

MCP’s security boundary largely ends at the MCP server. Once a tool call is permitted, what happens between that tool and the target device is mostly outside the protocol — and in infrastructure operations, that is often where the highest risk lives.

When a tool opens an SSH session to a device, the device typically sees only an authenticated login and a stream of commands. It usually does not see the context needed for safe authorization decisions: who the originating user was, whether the action was explicitly approved, whether it came from an autonomous planning step, whether untrusted input influenced the model, or which upstream policy checks allowed the call.

This creates a dangerous asymmetry. Upstream systems (the AI app, MCP server, and identity/policy layers) may have rich context but weak enforcement unless teams build it deliberately. The downstream device has execution authority, but in SSH/CLI workflows, it usually sees only a coarse identity and limited context.

That is why this gap is so serious for network devices. If the SSH session is valid and privileged, the device will execute the command without seeing the upstream user or decision context. From the device’s perspective, it is simply a valid session issuing valid commands.

Some modern interfaces support finer-grained controls (for example, gNMI path-based policies or NETCONF NACM), and cloud and database APIs often evaluate identity, action, and resource context at request time through IAM or row-level security. But when the operational path is SSH and CLI, which is still the reality in many environments, authorization usually collapses to a much coarser model: connected with a privilege level, or not.

So when teams say, “we’ll rely on the device to enforce policy,” what they often mean is: they authenticated to the device with a powerful account and are trusting the agent and surrounding controls to behave correctly.

That is not defense in depth.

Gap 5: Fragmented audit trails across AI agent, MCP server, and device logs

This gap is easy to underestimate until the first incident. When an AI agent performs a multi-step operation, the evidence usually ends up fragmented across multiple systems: the user conversation or application log, the agent runtime log, the MCP server tool invocation log, identity provider logs, SSH, TACACS+, or RADIUS logs, device command accounting or syslog, and sometimes ticketing or incident context. Each system captures part of the truth, but none captures the full chain of events.

MCP does not provide a standardized end-to-end audit model for this workflow. In practice, that means there is no portable correlation mechanism that reliably links a user request to every downstream tool call and then to the final device command. Even if MCP-level calls include request identifiers, that context does not automatically survive the hop into SSH sessions, TACACS+ command accounting, or device logs.

Correlating what happened requires manual matching of timestamps, usernames, hostnames, and session details across systems that were not designed to share a common trace context. That becomes a serious problem during incident response. After a bad or destructive change, teams may have to reconstruct basic facts by hand: who initiated the action, what prompt or surrounding context led to it, which tool invocation triggered the write operation, what policy checks were evaluated, which command actually ran on which device, and whether the action was approved or autonomous.

If your logs are not correlated by design, incident response becomes archaeology. In regulated or high-assurance environments, that is not just inconvenient. It is a governance and accountability gap.

Gap 6: Flat MCP tool discovery as an attack surface accelerator

MCP tool discovery is a core feature, not a flaw. The model needs to know what tools exist in order to decide which ones to call. But in infrastructure environments, capability discovery can become an attack accelerator if the server exposes too much detail and does not filter what is visible by identity or role. A connected client may quickly learn not just which tools exist, but also which actions are possible, what parameters they accept, and how to invoke them correctly.

This risk usually comes from two patterns working together: flat discovery (everyone sees the same capabilities) and overly generic tool interfaces.

In poorly designed deployments, discovery can effectively reveal the operational attack surface: read and write tools, device naming conventions, target selection patterns, broad command parameters such as device and command strings, and even workflow-specific actions such as rollback or link shutdown operations. That gives a compromised client or manipulated agent a practical map of what is possible and how to attempt it.

This risk becomes much worse when discovery is combined with weak authorization and overly generic tool design. A tool such as run_command_on_device(device: str, command: str) exposes a very broad execution surface while pushing policy and safety decisions into prompt interpretation or ad hoc checks. In practice, that means the model learns both the capability and the invocation format for high-impact actions.

The issue is not tool discovery itself. The issue is flat discovery, where every connected client sees the same capability set, and generic tools, where one interface can express too many actions without strong server-side validation.

Safer patterns are narrower and easier to govern because they encode intent, scope, and validation points directly in the interface. For example, tools such as the following create clearer enforcement points than a generic command runner:

- show_bgp_summary(device)

- get_interface_status(device, interface)

- request_interface_shutdown(device, interface, ticket_id, reason)

- apply_intent(intent_type, target, change_id)
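As a rough illustration of why narrow tools are easier to govern, the sketch below builds the device command from validated parameters instead of accepting a free-form string. The inventory, the interface naming pattern, and the CLI syntax are assumptions for illustration only; real syntax varies by vendor.

```python
import re

# Hypothetical inventory and naming rule; a real deployment would use a source of truth.
KNOWN_DEVICES = {"spine-01", "spine-02", "leaf-01"}
INTERFACE_RE = re.compile(r"(?:eth|Ethernet)\d{1,3}(?:/\d{1,3}){0,2}")


def build_interface_status_command(device: str, interface: str) -> str:
    """Return a fixed read-only command, or raise ValueError on bad input."""
    if device not in KNOWN_DEVICES:
        raise ValueError(f"unknown device: {device!r}")
    # fullmatch rejects anything beyond a plain interface name,
    # including separators, newlines, and extra keywords.
    if not INTERFACE_RE.fullmatch(interface):
        raise ValueError(f"invalid interface name: {interface!r}")
    return f"show interface {interface} status"
```

Because the caller can only supply a device and an interface name, there is no parameter through which a configuration command could be expressed.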

In other words, discovery should reveal only the capabilities a given user is allowed to use, and the tools themselves should be designed to make unsafe or unauthorized actions hard to express.
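Identity-aware discovery can be sketched as a filter over the tool registry. ToolSpec, REGISTRY, and the scope names below are hypothetical and are not FastMCP APIs. The point is only that the list returned to a client is derived from what that caller has been granted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolSpec:
    name: str
    description: str
    required_scope: str


# Hypothetical registry; scope names are illustrative.
REGISTRY = [
    ToolSpec("show_bgp_summary", "Read the BGP neighbor summary.", "net:read"),
    ToolSpec("get_interface_status", "Read interface state.", "net:read"),
    ToolSpec("request_interface_shutdown", "Request an interface shutdown.", "net:write"),
]


def visible_tools(granted_scopes: set[str]) -> list[str]:
    """Return only the tool names the caller's scopes allow it to see."""
    return [t.name for t in REGISTRY if t.required_scope in granted_scopes]


print(visible_tools({"net:read"}))
```

Filtering what is listed does not replace the check at invocation time. A client that guesses a hidden tool name should still be rejected when it tries to call it.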

How MCP security gaps combine into a failure chain

The gaps above do not stay separate for long. In a real deployment, they combine into a failure chain. A user interacts with an AI assistant that has access to MCP tools for network operations. The MCP server may authenticate the client, but still lacks per-tool and per-target authorization.

The agent sees a broad capability set, including write-capable operations, because discovery is not tightly filtered.

A bad instruction or untrusted content influences the model into choosing a dangerous tool call.

The tool executes using a shared, high-privilege device credential, the device accepts the command, and the action succeeds.

Logs may exist in several places, but user intent, agent decision-making, policy checks, and device execution are not cleanly correlated.

Each step is plausible, and together they produce an outcome that is difficult to prevent and difficult to investigate.

It is also important to be precise about scope. Some links in this chain come from protocol scope limits, some come from implementation choices, and some come from legacy device management realities. But the operational result can still be the same: a deployment that is technically spec-compliant yet operationally unsafe.

That is why “we implemented MCP” is not a meaningful security statement for infrastructure automation.

How to secure MCP for infrastructure operations: Five controls to build around the protocol

If you want to use MCP safely for infrastructure operations, the control point is not the protocol alone. The control point is the security architecture around it.

Identity-aware tool authorization

Tool use should be evaluated server-side against the requesting user identity, role or group, target device or environment, requested action and parameters, and runtime context such as approval state, incident mode, or change window. The model should not be the authorization engine.

Just-in-time and least-privilege device credentials

Wherever possible, use short-lived and scoped credentials such as ephemeral SSH certificates, per-user or per-session credentials, and time-bounded access with automatic expiry or revocation. The goal is to prevent the MCP server from becoming a concentrator of standing privilege.
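The properties that matter here (bound to a person, scoped to specific devices, and expiring quickly) can be shown with a small in-memory sketch. EphemeralCredential, issue_credential, and is_usable are hypothetical names, the five-minute lifetime is an arbitrary assumption, and no real signing takes place. In practice, a certificate authority or secrets engine would play the issuer role.

```python
import secrets
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class EphemeralCredential:
    credential_id: str
    principal: str        # the requesting human, not a shared automation account
    devices: frozenset    # devices this credential may be used against
    expires_at: datetime


def issue_credential(principal: str, devices: set, now: datetime,
                     ttl: timedelta = timedelta(minutes=5)) -> EphemeralCredential:
    """Stand-in for a real issuer; it only records scope and expiry."""
    return EphemeralCredential(secrets.token_hex(8), principal,
                               frozenset(devices), now + ttl)


def is_usable(cred: EphemeralCredential, device: str, now: datetime) -> bool:
    """Usable only against scoped devices and only before expiry."""
    return device in cred.devices and now < cred.expires_at


issued_at = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
cred = issue_credential("alice", {"leaf-01"}, issued_at)
print(is_usable(cred, "leaf-01", issued_at + timedelta(minutes=1)))
```

A stolen credential of this shape is useful against one device for a few minutes, and device logs can name alice rather than a generic automation account.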

Device-side enforcement

Even if your upstream policy checks are strong, you should still enforce controls at the execution layer through mechanisms such as command authorization and accounting, role-based shell restrictions, restricted shells or jump hosts, model-driven API authorization policies, target allowlists, and command validation. Assume upstream controls will fail eventually.
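As one hedged example of a last-line check at the execution layer, the sketch below validates both the target and the exact command text immediately before anything is sent over a session. The allowlists and the command syntax are illustrative assumptions. A real deployment might implement the same idea in a jump host or through command authorization on an AAA server.

```python
import re

# Hypothetical allowlists for one automation role; contents are illustrative only.
ALLOWED_TARGETS = {"leaf-01", "leaf-02"}
ALLOWED_COMMANDS = [
    re.compile(r"show (?:ip )?bgp summary"),
    re.compile(r"show interfaces? [A-Za-z0-9/.-]+(?: status)?"),
]


def validate_execution(device: str, command: str) -> None:
    """Raise PermissionError unless both target and command are allowlisted."""
    if device not in ALLOWED_TARGETS:
        raise PermissionError(f"target not allowlisted: {device!r}")
    # fullmatch rejects trailing text, newlines, and chained commands.
    if not any(p.fullmatch(command) for p in ALLOWED_COMMANDS):
        raise PermissionError(f"command not allowlisted: {command!r}")
```

The check is deliberately default-deny: a command that is not explicitly matched is refused, even if an upstream layer already approved the call.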

End-to-end audit correlation by design

You should be able to trace a single operation across the user request, agent decision step, MCP tool invocation, credential issuance event, session creation, and device command execution. If those records cannot be linked quickly and reliably, incident response becomes guesswork.
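A minimal way to picture this is a single trace identifier that is created when the user request arrives and attached to every record written afterwards. The field names and stage labels below are assumptions, and the sketch does not show how the identifier reaches device-side logs, which depends on the platform.

```python
import json
import uuid
from datetime import datetime, timezone


def new_trace_id() -> str:
    """Create one identifier per user request."""
    return uuid.uuid4().hex


def audit_event(trace_id: str, stage: str, **fields) -> str:
    """Serialize one audit record as a JSON line carrying the shared trace_id."""
    record = {
        "trace_id": trace_id,
        "stage": stage,
        "ts": datetime.now(timezone.utc).isoformat(),
        **fields,
    }
    return json.dumps(record, sort_keys=True)


def stages_for(log_lines: list[str], trace_id: str) -> list[str]:
    """Rebuild the ordered chain of stages for one operation from mixed logs."""
    events = (json.loads(line) for line in log_lines)
    return [e["stage"] for e in events if e["trace_id"] == trace_id]
```

If every layer writes records like these, rebuilding the chain for one operation is a filter on trace_id rather than a manual match on timestamps and usernames.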

Policy as code

Tool permissions, target scoping, command restrictions, approval requirements, and break-glass exceptions should be machine-readable, testable, version-controlled, and reviewed like application code. If the policy cannot be tested, it will drift.
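A small sketch of what testable policy can look like: the policy is plain data, the evaluator is a few lines, and a set of regression cases lives next to it in version control. The JSON layout, role names, and cases are hypothetical. Dedicated policy engines exist for this purpose, and this is not meant to stand in for one.

```python
import json

# Hypothetical policy document; in practice this would be a reviewed file in a repository.
POLICY_JSON = """
{
  "roles": {
    "viewer": {
      "tools": ["show_bgp_summary", "get_interface_status"],
      "environments": ["lab", "staging", "prod"]
    },
    "operator": {
      "tools": ["show_bgp_summary", "get_interface_status", "request_interface_shutdown"],
      "environments": ["lab", "staging"]
    }
  }
}
"""


def is_allowed(policy: dict, role: str, tool: str, environment: str) -> bool:
    rule = policy["roles"].get(role)
    if rule is None:
        return False  # default deny for unknown roles
    return tool in rule["tools"] and environment in rule["environments"]


# Regression cases reviewed with the policy: (role, tool, environment, expected).
POLICY_CASES = [
    ("viewer", "show_bgp_summary", "prod", True),
    ("viewer", "request_interface_shutdown", "lab", False),
    ("operator", "request_interface_shutdown", "staging", True),
    ("operator", "request_interface_shutdown", "prod", False),
    ("intern", "show_bgp_summary", "lab", False),
]


def failing_cases(policy: dict) -> list[tuple]:
    """Return every regression case whose outcome differs from the expectation."""
    return [c for c in POLICY_CASES if is_allowed(policy, c[0], c[1], c[2]) != c[3]]


policy = json.loads(POLICY_JSON)
print(failing_cases(policy))
```

If someone later adds prod to the operator's environments, the fourth case fails in review or CI before the change reaches a device.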

Conclusions: MCP compliance is not the same as infrastructure security

This is not a takedown of MCP. MCP is useful, and standardized tool integration is genuinely valuable. A common protocol is an important foundation for building secure systems at scale, but a protocol is not a control framework. In infrastructure operations, interoperability does not replace authorization, least privilege, downstream enforcement, or auditability.

That is the actual security work.

Next in the series

In the next post in this series, we walk through a defense-in-depth architecture that addresses each of these gaps — using an identity provider, a secrets engine with just-in-time SSH certificates, per-tool scope enforcement, device-side command validation, and policy-as-code — tested against real network devices in a reproducible lab environment.

Continue reading: MCP server security in practice

Part 1 mapped the gaps. Part 2 closes them. Tomasz moves from problem to implementation, walking through JWT authentication at the MCP server boundary, per-tool scope enforcement with FastMCP decorators, and discovery-time filtering that ensures agents see only the tools they're authorized to use.

Read it here: MCP server security: JWT authentication, scope-based authorization, and tool-level access control for network automation

References

- MCP Security Best Practices — Model Context Protocol Specification

- MCP Authorization — Model Context Protocol Specification

- The MCP Authorization Spec Is... a Mess for Enterprise — Christian Posta

- The Security Risks of Model Context Protocol — Pillar Security

- A Timeline of MCP Security Breaches — AuthZed

- MCP Security Vulnerabilities — Practical DevSecOps

- Is That Allowed? Authentication and Authorization in MCP — Stack Overflow Blog

- Security Risks of Agentic AI: An MCP Introduction — Bitdefender

- MCP Critical Vulnerabilities — Strobes

- Model Context Protocol: Understanding Security Risks and Controls — Red Hat