At high packet rates, the overhead of the Linux kernel's networking stack becomes a real constraint; context switches, system calls, and general-purpose abstractions all add up. Kernel bypass frameworks like DPDK exist precisely to solve this.

DPDK (Data Plane Development Kit) is a set of libraries for implementing user-space drivers for NICs (Network Interface Controllers). It provides a set of abstractions that allows a sophisticated packet processing pipeline to be programmed. But how does DPDK work? How is it able to access the hardware directly? How does it communicate with the hardware? Why does it require a UIO module (Userspace input-output)? What are hugepages, and why are they so crucial?

In this blog post, I will try to explain, with a reasonable amount of detail, how a standard kernel space NIC driver works, how a user space program can access hardware, and what performance gains can be achieved through kernel bypass from having it do so.

Linux kernel networking software stack: Processing and performance limits

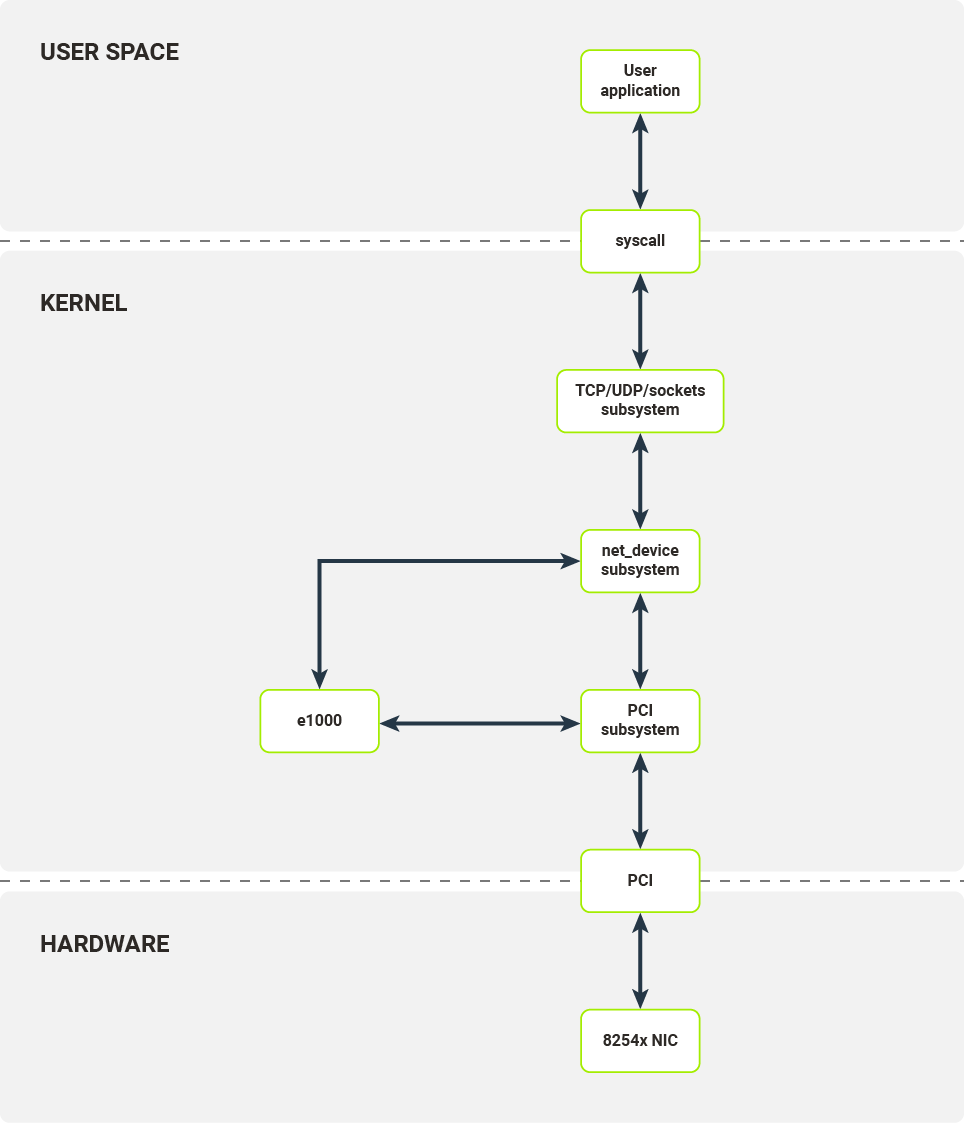

Before diving into the low-level details, let’s examine how a standard networking software stack on Linux works, and where its performance limitations emerge under high packet load.

Any user application that seeks to establish a TCP connection or send a UDP packet has to use the sockets API, exposed by libc. In the case of TCP, internally, several syscalls are used:

- socket() - allocates a socket and a file descriptor;

- bind() - binds a socket to an IP address and/or protocol port pair (application specifies both or lets the kernel choose the port);

- listen() - sets up connection queue (only for TCP);

- accept4() - accepts an established connection;

- recvfrom() - receives bytes waiting on the socket (if there are any, in the case of empty read buffer block);

- sendmsg() - sends a buffer over the socket.

Call to each of these kernel-side functions invokes a context switch, which eats up precious compute resources, adding measurable latency to the packet processing path. During a context switch, the following steps are executed:

- Userspace software calls kernel-side function;

- The CPU state (user application) is stored in memory;

- CPU privilege mode is changed from underprivileged to privileged (from ring 3 to ring 0); privileged mode allows for more control over the host system, e.g., access IO ports, configure page tables;

- The CPU state (kernel) is recovered from memory;

- Kernel code (for a given code) is invoked.

Let’s assume that our application wants to send some data. Before the application’s data goes onto the wire, it is passed through several layers of kernel software.

Kernel socket API prepares an sk_buff structure, which is a container for packet data and metadata. Depending on the underlying protocols (network layer - IPv4/IPv6 and transport layer - TCP/UDP), the socket API pushes the sk_buff structure to the underlying protocol implementations of the send operation. These implementations add lower-layer data such as port numbers and/or network addresses.

After passing through the higher-level protocol layers, prepared sk_buffs are then passed to the netdev subsystem. The network device, along with the corresponding netdev structure responsible for sending this packet, are chosen based on IP routing rules in the Linux kernel. The netdev structure’s TX callbacks are then called.

The netdev interface enables any module to register itself as a layer 2 network device in the Linux kernel. Each module has to provide the ability to configure the device’s offload, inspect statistics, and transmit packets. Packet reception is handled through a separate interface. When ready for transmission by the NIC, the prepared sk_buff is passed to the netdev’s TX callback, which is a function of the kernel module.

The last step moves us into concrete device driver territory. In our example, that device is the e1000 driver for the family of 8254x NICs.

After receiving a TX request from the upper driver layers, the e1000 driver configures the packet buffer, signals to the device that there is a new packet to be sent, and notifies the upper layer once the packet has been transmitted (note that that is not the equivalent of successful reception on the other endpoint).

Today, the most commonly used interface for communication between kernel drivers and network devices is a PCI bus, e.g., PCI Express link-icon .

To communicate with a PCI device, the e1000 kernel module has to register itself as a PCI driver in Linux’s PCI subsystem. The PCI subsystem handles all the plumbing required to correctly configure PCI devices: bus enumeration, memory mapping, and interrupt configuration. The e1000 kernel module registers itself as a driver for a specific device (identified by vendor ID and device ID). When an appropriate device is connected to the system, the e1000 module is called to initialize the device - that is, to configure the device itself and register the new netdev.

The next two sections explain how the NIC and kernel module communicate over the PCI bus.

NIC-to-Kernel Communication: PCI Bus Interfaces, MMIO, and DMA

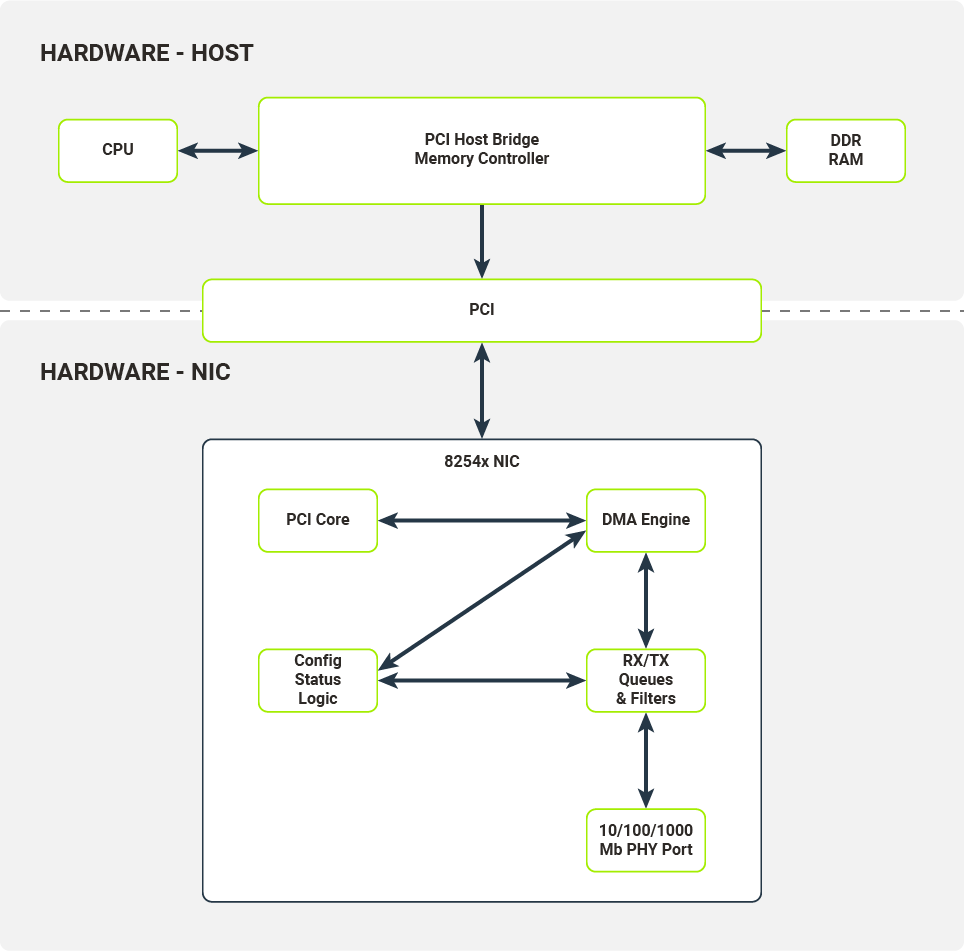

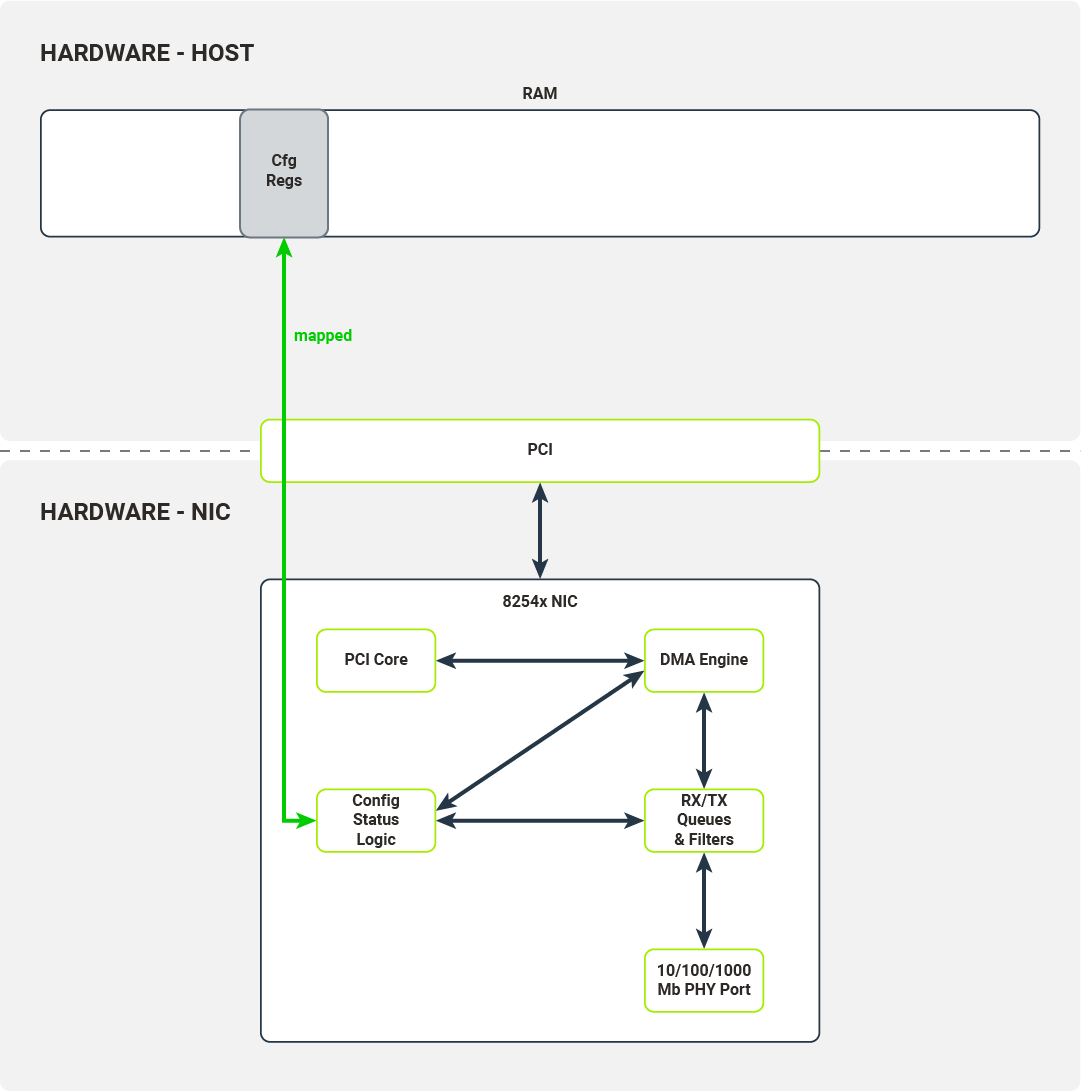

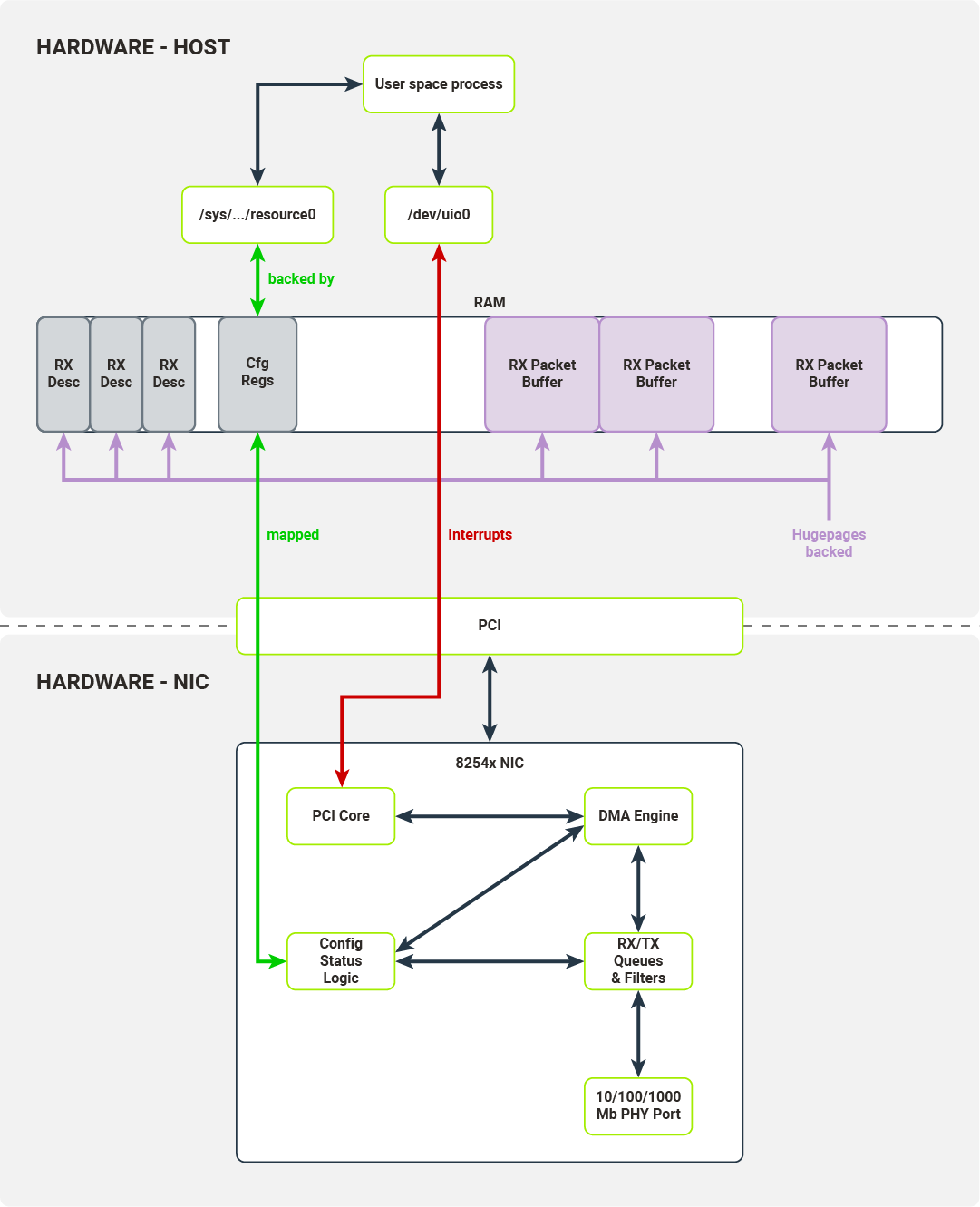

In Figure 2, the hardware architecture of a typical computer system with a network device attached to a PCI bus is presented. We will use the 8254x NICs family, more specifically, their single-port variants, as in the example.

The most important components of the NIC are:

- 10/100/1000 Mb PHY Port

- RX/TX Queues & Filters

- Config/Status/Logic Registers

- PCI Core

- DMA Engine

10/100/1000 Mb PHY Port

The hardware component responsible for encoding/decoding packets on the wire.

RX/TX Queues & Filters

Typically implemented as hardware FIFOs (First-In First-Out queues), RX/TX Queues directly connect to PHY ports (Physical Ethernet Ports). Frames decoded by the PHY port are pushed onto the RX queue (a single queue on 8254x NICs) and later consumed by the DMA Engine (Direct Memory Access Engine). Frames ready to be transmitted are pushed onto TX queues and later consumed by the PHY Port. Before being pushed onto RX queues, the received frames are processed by a set of configurable filters (e.g., Destination MAC filter, VLAN filter, Multicast filter). Once a frame passes the filter test, it is pushed onto the RX queue with appropriate metadata attached (e.g., VLAN tag).

Config/Status/Logic

The Config/Status/Logic module consists of the hardware components that configure and control the NIC’s behavior. The driver can interact with this module through a set of configuration registers mapped to physical RAM. Writes and reads from certain locations in physical RAM will be propagated to the NIC. The Config/Status/Logic module controls the behaviour of RX/TX queues and filters, and collects traffic statistics from them. It also instructs the DMA Engine to perform DMA transactions (e.g., transfer data packets from the RX queue to the host’s memory).

PCI Core

The PCI Core module provides an interface between the NIC and the host over the PCI bus. It handles all the necessary bus communications, memory mappings, and emits interrupts received by the host’s CPU.

DMA Engine

The DMA Engine module handles and orchestrates DMA transactions from the NIC’s memory (packet queues) to the host’s memory over the PCI bus.

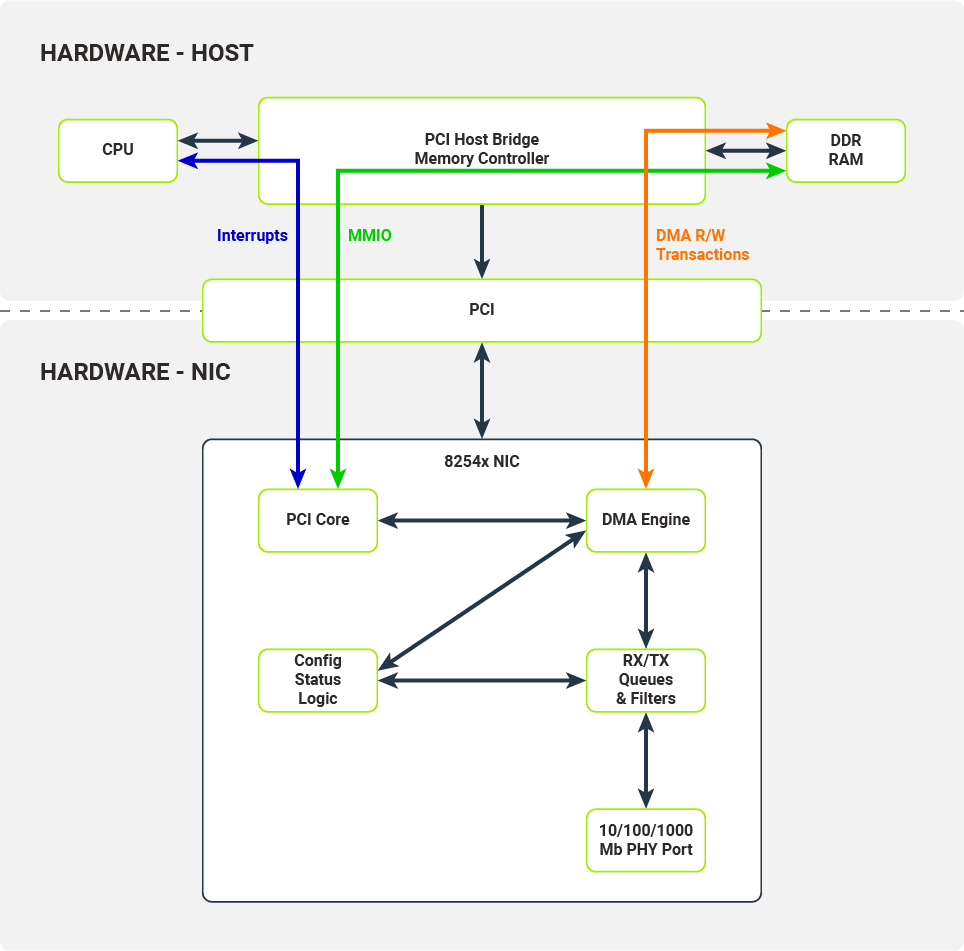

The Host and the NIC communicate across several interfaces over the PCI bus. These interfaces are utilized by kernel drivers to configure the NIC and receive/transmit data from/to packet queues.

MMIO (Memory-Mapped Input-Output)

Configuration registers are memory-mapped to the host’s memory. This mechanism allows the kernel driver to control NIC behavior without direct I/O port access.

Interrupts

NIC fires interrupts on specific events, e.g., link status change or packet reception.

DMA R/W Transactions (DMA Read/Write Transactions)

Packet data is copied to the host's memory from the NIC packet queue (when received) or copied to the NIC packet queue from the host’s memory (when transmitted). These data transfers are carried out without the host’s CPU intervening and are controlled by the DMA Engine.

NIC-to-Kernel Data Flow: RX/TX Descriptor Queues and DMA Transactions

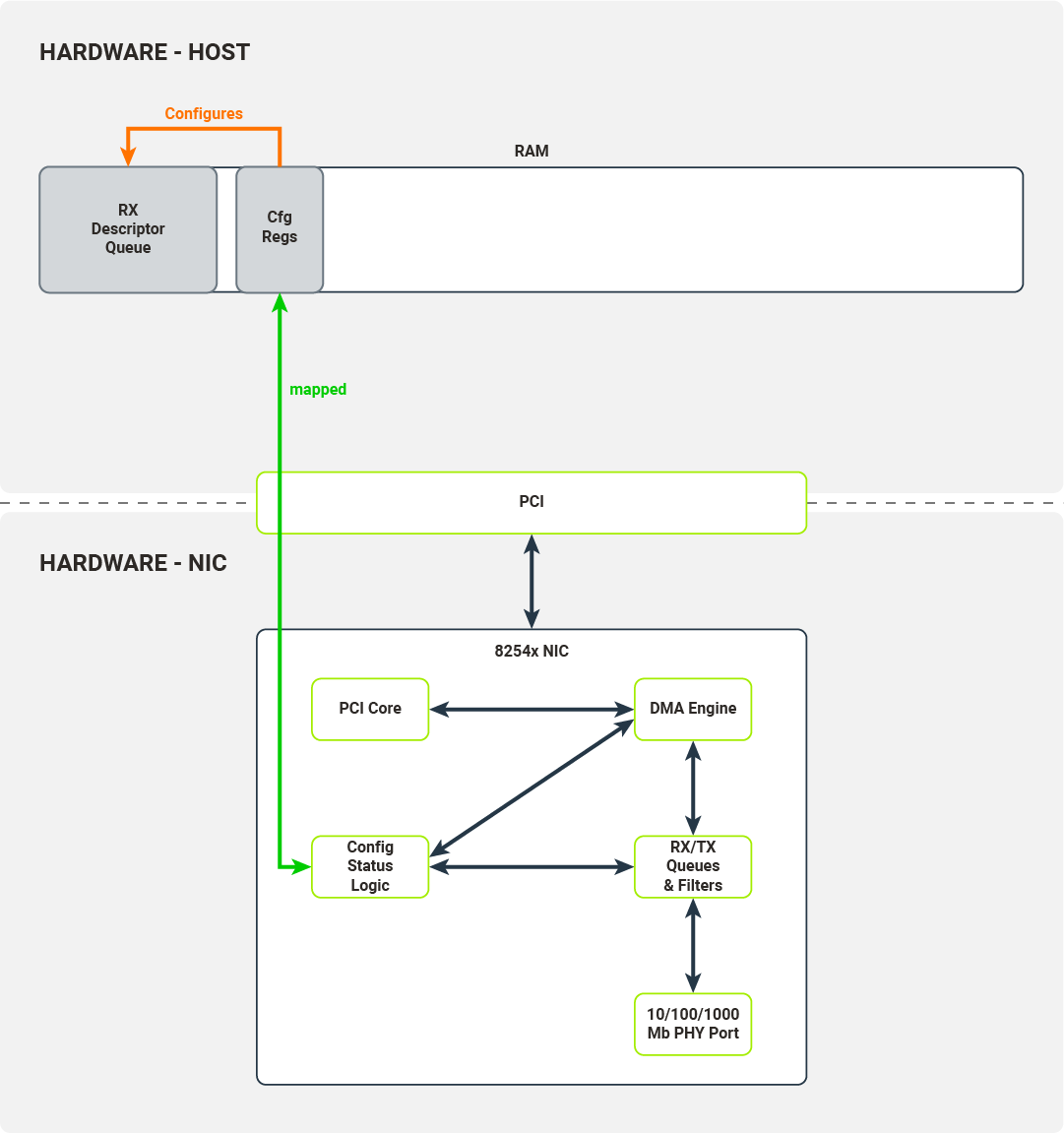

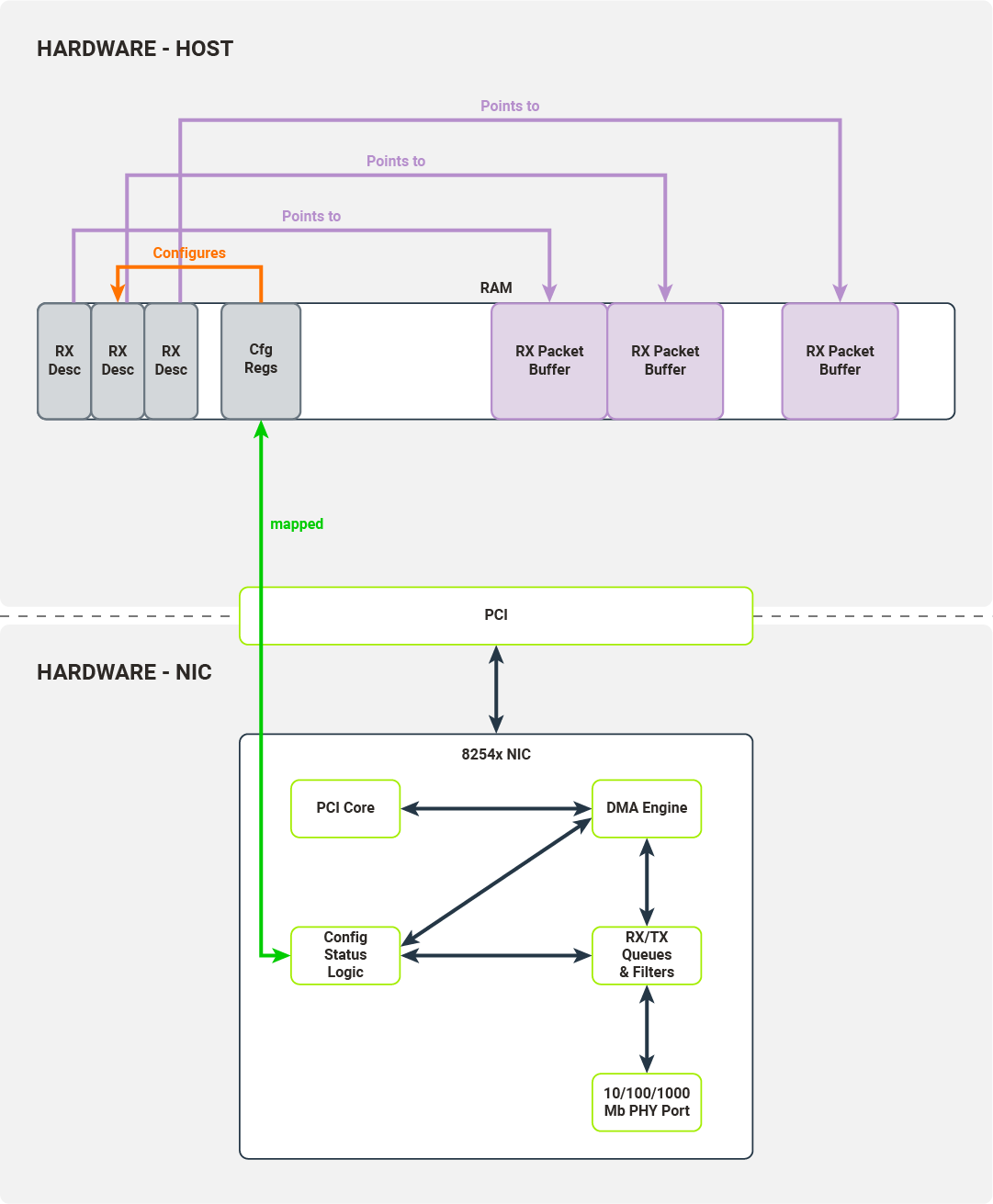

To better understand the data flow between the NIC and the kernel, we now turn to the RX data flow, a critical path for understanding how kernel drivers achieve low-latency packet reception. We will go step by step and discuss the data structures required by the NIC and kernel driver in order to receive packets.

After the NIC has been registered by the kernel PCI subsystem, the driver enables MMIO for this device by mapping IO memory regions to the kernel address space. The driver will use this memory region to access the NIC’s configuration registers and control its function. The driver can, for example:

- check link status

- mask/unmask interrupts

- override Ethernet auto-negotiation options

- read/write Flash/EEPROM memory on NIC

When a driver wants to configure packet reception, it accesses the following set of configuration registers:

- Receive Control Register - enables/disables packet reception, configures RX filters, sets the RX buffer size;

- Receive Descriptor Base Address - the base address of the receive descriptor buffer;

- Receive Descriptor Length - the maximum size of the receive descriptor buffer;

- Receive Descriptor Head/Tail - offsets from the beginning of the receive descriptor buffer they point to head/tail of the receive descriptor queue;

- Interrupt Mask Set/Read - unmask/mask (enable/disable) packet reception interrupts.

Most of the configuration registers are used to set up a receive descriptor buffer.

The received descriptor buffer is a contiguous array of packet descriptors. Each descriptor describes the physical memory locations where the packet data will be transferred by the NIC and contains status fields determining which filters the packets have passed. The kernel driver is responsible for allocating the packet buffers, which can be written to by the NIC’s DMA engine. After successful allocation, the driver puts these buffers’ physical addresses into packet descriptors.

The driver writes the packet descriptor buffer’s base address into the configuration register, initializes the receive descriptor queue (by initializing head/tail registers), and enables packet reception.

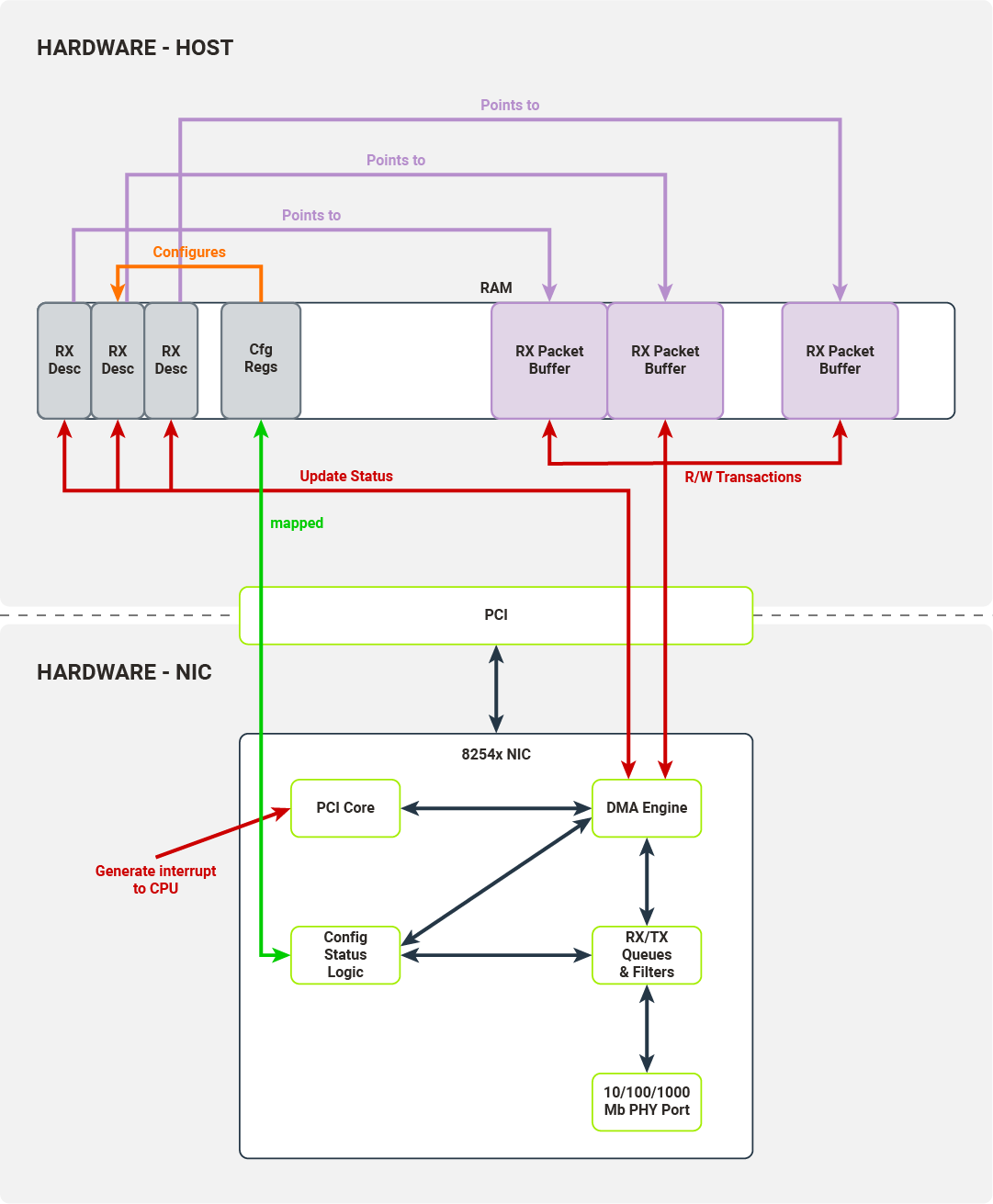

Once the PHY Port has received a packet, it pushes it onto the NIC’s RX queue and traverses a configured filter. Packets ready to be transferred to hosts are transferred by the DMA engine to the hosts’ memory. The destination memory location is taken from the tail of the descriptor queue.

The DMA Engine updates the status fields in the tail of the descriptor queue and, after the operation is finished, the NIC increments the tail. Finally, the PCI Core generates an interrupt to the host, signaling that another packet is ready to be retrieved by the driver.

A symmetrical process is carried out for the packet transmission path, completing the full kernel-space packet processing cycle.

The kernel driver puts packet data in the packet buffer indicated by the memory location field in the descriptor under the tail pointer. The kernel driver marks the packet as ready to transmit and increments the tail pointer.

When the head and tail pointers in the descriptor queue diverge, the NIC inspects a packet descriptor under the head pointer and initiates a DMA transaction to transfer the packet data from the host memory to the TX packet queue. When the transaction is complete, the NIC increments the head pointer.

DPDK User Space Drivers: Bypassing the Kernel with UIO and Hugepages

We have covered how kernel space drivers communicate with hardware. DPDK, however, is a user-space program, and the user space cannot directly connect with hardware, a fundamental constraint that kernel bypass frameworks must solve. This presents us with a few problems:

- How to access configuration registers;

- How to retrieve physical memory addresses of the memory allocated;

- How to prevent process memory from swapping in/out or moving during runtime;

- How to receive interrupts.

In this section, we will discuss the mechanisms the Linux kernel provides to overcome these obstacles.

Why Move NIC Drivers to User Space? Performance, Flexibility, and Development Speed

There are three primary reasons to move the drivers from the kernel to the user space:

- Reduce the number of context switches required to process packet data - each syscall causes a context switch, which takes up time and resources.

- Reduce the amount of software on the stack - Linux kernel provides abstractions-- general-purpose ones, in some sense--that can be used by any process; having a networking stack implemented only for specific use cases allows us to remove unnecessary abstractions, thus simplifying the solution and possibly improving performance.

- User space drivers are easier to develop than kernel drivers - if you want to develop a kernel driver for a very specific device, it might be hard to support. In the case of Linux, it would be beneficial to have it merged into a mainline kernel, which takes considerable time and effort. Moreover, you are bound by Linux’s release schedule. Finally, bugs in your driver may cause the kernel to crash.

UIO

Recall, for a moment, the Linux networking stack (Figure 1). Interfaces used to communicate directly with the hardware are exposed by the kernel’s PCI subsystem and its memory management subsystem (mapping IO memory regions). In order for our user space driver to have direct access to the device, these interfaces must somehow be exposed. Linux can expose them by utilizing the UIO subsystem.

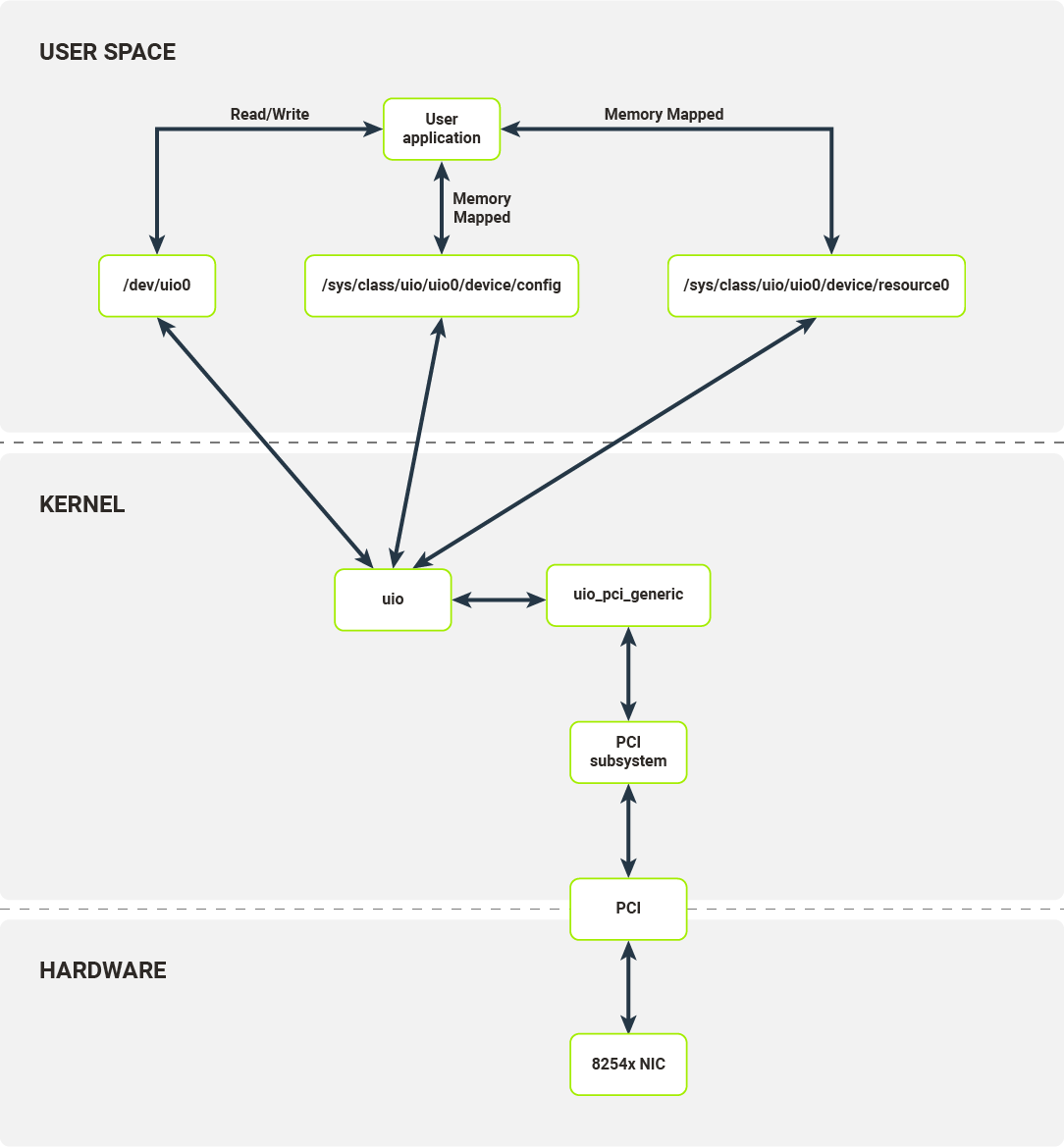

UIO (User space Input/Output) is a separate kernel module responsible for setting up user space abstractions, usable by user processes, to communicate with hardware, enabling kernel bypass without sacrificing hardware control. This module sets up internal kernel interfaces for device passthrough to user space. To use UIO for a PCI device, you’ll need to bind your NIC to the uio_pci_generic driver (this process requires unbinding the device’s dedicated driver, e.g., the e1000, and manually binding the uio_pci_generic driver). This driver is a PCI driver that works with the UIO module to expose the device’s PCI interfaces to the userspace. The interfaces exposed by the UIO are presented in Figure 8.

For each bound device, the UIO module exposes a set of files with which user space applications can interact. In the case of the 8254x NIC, it exposes:

- /dev/uio0 - reads from this file allow the user space program to receive interrupts; read blocks until interrupt has been received; read() calls return the number of interrupts;

- /sys/class/uio/uio0/device/config - user space can read or mmap this file; it is directly mapped to PCI device configuration space where e.g. the device and vendor ID are stored;

- /sys/class/uio/uio0/device/resource0 - user space can mmap this file; it allows a user space program to access BAR0, first IO memory region of the PCI device, thus a user space program can read from/write to PCI device configuration registers.

It is worth noting that UIO is not the only mechanism available for PCI device passthrough to user space. VFIO (Virtual Function I/O) is a more modern alternative that provides the same hardware access capabilities with the addition of IOMMU protection. The remainder of this article focuses on UIO as the foundational mechanism, but the distinction is worth keeping in mind.

In some sense UIO module only provides a set of memory translations allowing the user space process to configure the device and receive notifications on device events.

To properly implement a packet processing pipeline, we have to define an RX/TX descriptors buffer. Each descriptor buffer has a pointer (memory address), which points to preallocated packet buffers. The NIC only accepts the physical addresses as valid memory locations to run DMA transactions on, but processes have their own virtual address space. It is possible to find a physical address corresponding to each virtual address (using /proc/self/pagemap interface [pagemap documentation link-icon ]), but this correspondence cannot be used consistently. There are a few reasons for this:

- The process can be swapped out/in and change its location:

- Sidenote: This can be averted - mlock().

- The process can be moved to another NUMA node and the kernel might move its memory:

- The Linux kernel does not guarantee that physical pages will stay in the same place during the process runtime.

This is where the hugepage mechanism becomes important.

Hugepages

On x86 architecture (both the 32- and 64-bit variants), standard physical pages are 4KB in size. Hugepages were introduced into the architecture to solve two problems, both directly relevant to high-performance packet processing pipelines.:

- First, to reduce the number of page table entries required to represent contiguous chunks of memory bigger than 4KB. This reduces TLB usage, thus reducing TLB thrashing; (The TLB - Translation Lookaside Buffer - is in-CPU cache for page table entries);

- Second, to reduce the overall size of the page table.

On x86 architecture, depending on the hardware support, hugepages can be 2MB or 1GB in size. The Linux kernel memory management subsystem allows the administrator to reserve a hugepage pool that is usable by user-space processes. By default, when the kernel is configured to preallocate the hugepages, the user space process can create a file in the /dev/hugepages directory. This directory is backed by a pseudo-filesystem, hugetlbfs. Each file created in this directory will be backed by hugepages from the preallocated pool. The user space process can create files of the size called for and then map them into its address space. The user space process can then extract the physical address of this memory region from /proc/self/pagemap and use it for packet buffers in the descriptor queue.

It is safe to use hugepages as memory backing the descriptor queue and packet buffers, because Linux kernel guarantees that the physical location will not change during runtime.

Figure 9 illustrates an RX packet queue with changes showing which interfaces are consumed by the user space process.

Frequently Asked Questions

What is the difference between DPDK and standard Linux networking?

Standard Linux networking routes packets through the kernel's TCP/IP stack, which involves multiple system calls and context switches per packet. Without DPDK, each time a NIC receives incoming packets, a kernel interrupt fires to process them, followed by a context switch from kernel space to user space, adding measurable delay. DPDK eliminates this by running poll mode drivers entirely in user space, making it the preferred choice for high-throughput use cases like NFV, 5G infrastructure, and packet brokers.

Why does DPDK require hugepages?

There are two reasons. By using hugepage allocations, performance is increased since fewer pages are needed, and therefore fewer Translation Lookaside Buffers are required, reducing the time it takes to translate a virtual page address to a physical page address. Without hugepages, high TLB miss rates would occur with the standard 4KB page size.

The second reason is memory stability: DPDK allocates hugepages because the Linux kernel treats them differently from regular 4KB pages — specifically, the operating system will not change their physical location during runtime, which is essential for the NIC's DMA engine to safely perform direct memory access.

What is UIO and why does DPDK use it?

UIO (Userspace I/O) is a Linux kernel module that exposes PCI device interfaces (configuration space, memory-mapped I/O, and interrupt notification) to user space processes via a controlled set of file descriptors. DPDK uses it to read and write NIC configuration registers and receive interrupt signals without a dedicated kernel driver. It is recommended that vfio-pci be used as the kernel module for DPDK-bound ports in all cases; UIO-based modules like uio_pci_generic remain available but lack IOMMU protection and are inherently less secure.

Does DPDK completely eliminate kernel involvement?

Not entirely. The kernel still handles initial setup, UIO or VFIO module loading, hugepage allocation, and PCI device binding, all require kernel support. What DPDK eliminates is the kernel's involvement in the data path itself: data goes straight from the NIC driver to the user space application, bypassing the kernel networking stack for every packet processed at runtime.

What hardware does DPDK support?

Originally developed by Intel to run on x86-based CPUs, DPDK now supports other CPU architectures, including IBM POWER and ARM. NIC support is handled through a Poll Mode Driver (PMD) architecture, with drivers available for hardware from Intel, Mellanox/NVIDIA, Broadcom, Marvell, and others. The 8254x NIC family used as the example throughout this article represents one of the foundational reference implementations.

Summary: DPDK Hardware Access from User Space

In this article, we have discussed:

- The standard packet processing pipeline in kernel drivers;

- Why user space processes cannot directly access hardware;

- Interfaces exposed by Linux kernel to user space which allow users to create user space drivers.

If you’d like to go deeper on low-level network programming, these articles build directly on what’s covered here:

- Rust vs C: safety and performance in low-level network programming - explores whether Rust can replace C for DPDK development. The CodiLime team built Rust bindings for DPDK, re-implemented a standard l2fwd application, and ran head-to-head performance benchmarks, making it a direct practical extension of the user space driver concepts introduced here.

- FD.io’s VPP’s mechanical sympathy – thread and memory management techniques explained - VPP is a high-performance packet processing stack that uses DPDK as its network I/O layer. This article goes under the hood of how VPP distributes packet processing across CPU cores, manages NUMA-aware memory, and integrates with DPDK's mempool system, all without copying packet data between the two frameworks.

- How memory types affect DPDK application performance — case study - a real debugging investigation into what happens when you swap hugepage-backed memory for GPU memory in a DPDK application. It covers mbuf pools, IOMMU, DMA mapping, and VFIO in practice, picking up directly where the hugepages and UIO sections of this article leave off.

And if you're building or optimizing a high-performance packet processing pipeline, CodiLime's network engineering team works with these technologies at the system level - Network Infrastructure Design | CodiLime