The topic of "Day 0/Day 1/Day 2" in the context of the software lifecycle is very prominent in the professional IT media today. Presentations and conference talks often focus on making software development processes effective and easy to manage. To do so, it is necessary to clearly define the terms in use. "Day 0/Day 1/Day 2" are often understood only intuitively, which can introduce ambiguity when talking about the software lifecycle. For that reason, I have decided to define them more precisely, to show the entire process of software development and how the terms are used in real projects.

This short blog post defines the "Days" as stages of the software lifecycle. It also describes how the cloud has changed the traditional way of thinking about software development and maintenance.

According to the "State of Cloud Adoption in 2021" report, cloud-based development and deployment was a top priority in 2021 for 83% of both prominent companies and mid-market organizations. More cloud statistics? More than half (52%) of applications developed by mid-sized and large companies are cloud-based. That figure is expected to increase by a further 30% by 2023, as more applications migrate to the cloud.

So, without further ado, let’s explore just how ‘days’ and cloud come together.

What "days" are all about

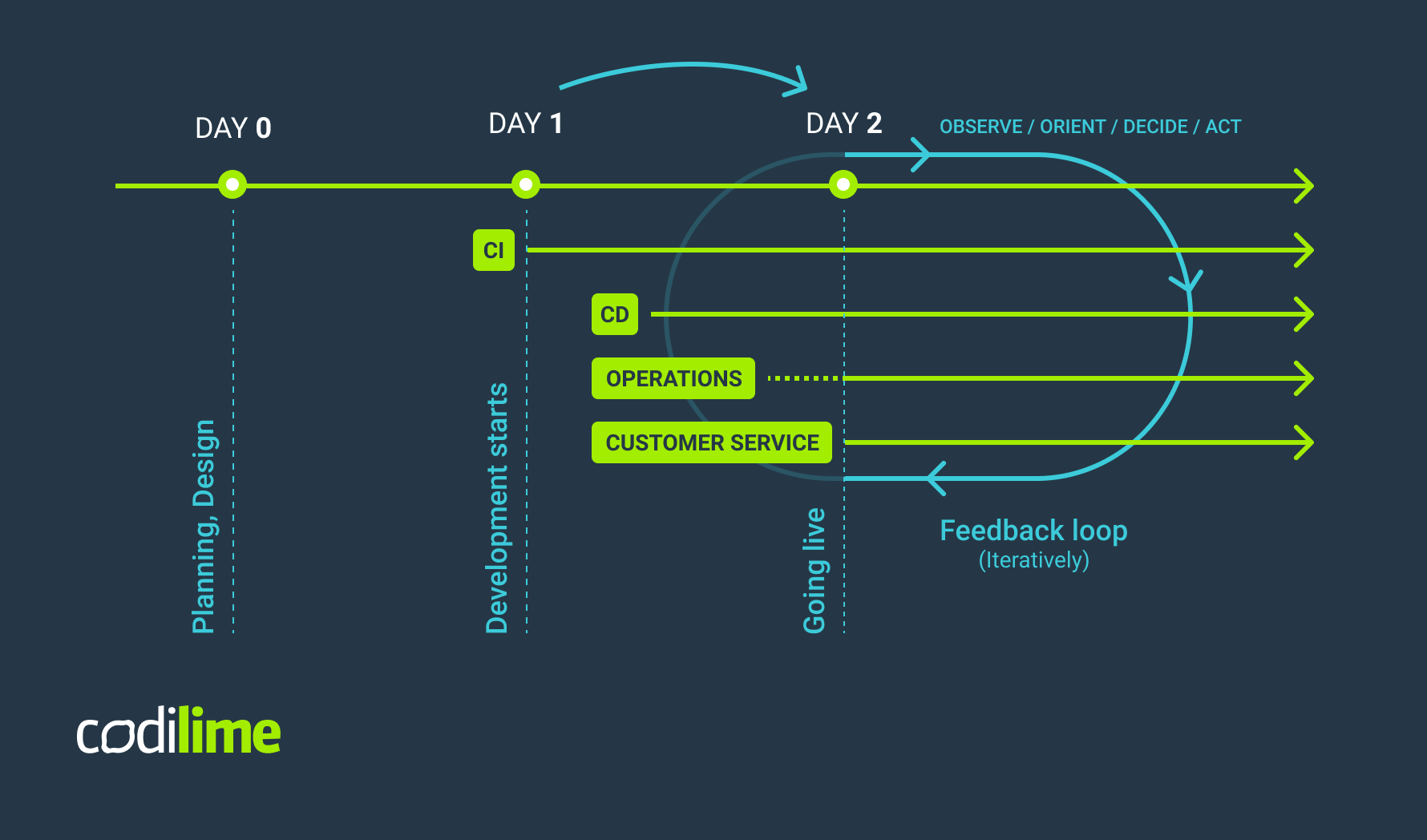

In IT, the terms Day 0/Day 1/Day 2 refer to different phases of the software life cycle. In military parlance, Day 0 is the very first day of training, when recruits enter their formative stage. In software development, it represents the design phase, during which project requirements are specified, requirements engineering is conducted, and the architecture of the solution is decided.

Day 1 involves developing and deploying the software that was designed in the Day 0 phase. In this phase we create not only the application itself, but also its infrastructure, network and external services, and we implement the initial configuration of it all.

Day 2 is the time when the product is shipped or made available to the customer. Here, most of the effort is focused on maintaining, monitoring and optimizing the system. Analyzing the behavior of the system and reacting correctly are of crucial importance, as the resulting feedback loop is applied until the end of the application’s life.

In the pre-cloud days, these phases were handled separately, with no overlap between them. Today, that’s no longer the case. Agile software development is now part of teams’ day-to-day work. Thanks to this, the design and development stages (Day 0 and Day 1) work together more organically. More and more often, even Day 2 is tied into the loop.

Let’s have a look at how all of this applies to the lifecycle of modern applications.

Day 0 - boring, but essential

Day 0 is often overlooked because it can be boring, but that doesn’t diminish its importance. A successful software product is the result of a thorough planning and design process. First, it is necessary to carefully plan the architecture of a system or app and the resources (CPUs, storage space, RAM) needed to get it up and running. Secondly, you should define measurable milestones leading to the project goal. Each milestone should have a precise date. This helps you measure the progress of the project and determine whether you are falling behind schedule. All project time estimates should be based on realistic probabilities, not merely on best-case scenarios. When planning, it is good practice to add buffers, as unexpected events can throw sand in the gears of even the most carefully elaborated plan. The testing phase plays an important role too, and should be included in the initial project planning. These are the basic requirements, and they are as important in the “cloud era” as they have ever been.
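One common way to move from best-case guesses to probability-based estimates is a three-point (PERT-style) estimate. The sketch below is only an illustration of that idea, not a method prescribed by this post: the task names, the durations and the one-sigma buffer are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskEstimate:
    name: str
    optimistic: float   # best-case duration in days
    most_likely: float  # realistic duration in days
    pessimistic: float  # worst-case duration in days

    def expected(self) -> float:
        # PERT weighted mean: the most likely value counts four times.
        return (self.optimistic + 4 * self.most_likely + self.pessimistic) / 6

    def std_dev(self) -> float:
        # PERT approximation of the spread of the estimate.
        return (self.pessimistic - self.optimistic) / 6


def milestone_estimate(tasks, buffer_sigmas=1.0):
    """Return (expected_days, buffered_days) for tasks done one after another."""
    expected = sum(task.expected() for task in tasks)
    # Variances of independent tasks add up; the buffer is a multiple of sigma.
    sigma = sum(task.std_dev() ** 2 for task in tasks) ** 0.5
    return expected, expected + buffer_sigmas * sigma


# Hypothetical Day 0 tasks, used only to exercise the functions.
tasks = [
    TaskEstimate("architecture design", 3, 5, 13),
    TaskEstimate("resource sizing", 1, 2, 3),
]
expected, buffered = milestone_estimate(tasks)
print(f"expected: {expected:.1f} days, with buffer: {buffered:.1f} days")
```

The wider the gap between the optimistic and pessimistic values, the larger the buffer becomes, which matches the advice to plan for unexpected events rather than for the best case.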

Still, the cloud has changed two things in Day 0 planning. First, thanks to the cloud, getting different or new computing resources (CPUs, storage space, RAM) at any point in the project is easier than it was with on-prem infrastructure. Therefore, some mistakes made at the planning stage can be forgiven. On the other hand, committing to a specific cloud vendor as early as the planning phase may later result in vendor lock-in. Switching vendors can cost money and take time, so a cloud vendor should be chosen wisely.

Secondly, as the shift to the cloud shows, the effort related to operations remains the same, but it is no longer focused on infrastructure. Now it is software that drives automation and value.

Day 1 - where things get creative

For developers and project owners, Day 1 is definitely the most interesting phase. The initial design is brought to life and the infrastructure is created based on the project’s specifications. To become truly cloud-native, best practices must be observed; guidelines such as the Twelve-Factor App methodology can be followed. What’s more, using the cloud means that you should stick to an important practice of the DevOps lifecycle: Continuous Integration/Continuous Delivery (CI/CD).
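To make one of the Twelve-Factor guidelines concrete, here is a minimal sketch of the "config" factor, which keeps configuration in environment variables rather than in the code. The variable names (`DATABASE_URL`, `LOG_LEVEL`, `WORKER_COUNT`) and the `Settings` class are hypothetical and exist only for this illustration.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    database_url: str
    log_level: str
    worker_count: int


def load_settings(env=os.environ) -> Settings:
    """Build the settings from environment variables (hypothetical names)."""
    try:
        # Required value: fail fast at startup instead of at first use.
        database_url = env["DATABASE_URL"]
    except KeyError:
        raise RuntimeError("DATABASE_URL must be set") from None
    return Settings(
        database_url=database_url,
        # Optional values fall back to defaults that suit local development.
        log_level=env.get("LOG_LEVEL", "INFO"),
        worker_count=int(env.get("WORKER_COUNT", "2")),
    )


# A plain dict stands in for the real environment in this example.
settings = load_settings({"DATABASE_URL": "postgres://localhost/app",
                          "WORKER_COUNT": "4"})
print(settings)
```

Because the same build reads different values in each environment, promoting a release from test to production requires no code change, which is what makes Continuous Delivery across multiple environments manageable.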

The cloud has brought us an important change from the old-school approach to software development: an application starts to live as soon as the first lines of code are pieced together to make your proof-of-concept. You can start with Continuous Integration practices to test your application’s sanity, but you will quickly venture towards Continuous Delivery. When developing the app, we start introducing some operational elements, especially when multiple environments are used. Taking care with these operational elements will make the maintenance team’s life easier during the maintenance phase (Day 2).

There are several classes of tools that can be used during Day 1. They can be grouped by the problems they solve. Infrastructure as Code (IaC) belongs at the top of the list. With IaC, the operations environment is managed in the same way as application code. This approach allows DevOps best practices, including version control, virtualized tests and continuous monitoring, to be applied to the code that drives the infrastructure. There are plenty of tools at our disposal here, such as Terraform, Ansible or Puppet, to name a few. The second class of tools comprises container orchestration systems, which automate the deployment and management of containers. Kubernetes and managed offerings built on it, such as Google Kubernetes Engine and Amazon’s Elastic Kubernetes Service, are essential.
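The core idea behind Infrastructure as Code can be sketched without any particular tool: the desired environment is described as data kept under version control, and a program works out what must change to reach it. The snippet below is a simplified, tool-agnostic illustration. It does not use the API of Terraform, Ansible or Puppet, and the resource names and fields are made up.

```python
def plan(desired: dict, actual: dict) -> list:
    """Compare desired and actual resources and list the actions required."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != spec:
            actions.append(("update", name))
    for name in sorted(actual):
        if name not in desired:
            # Anything running that is no longer declared gets removed.
            actions.append(("delete", name))
    return actions


# Desired state, as it might be declared in a version-controlled file.
desired = {
    "web-server": {"cpus": 2, "ram_gb": 4},
    "database": {"cpus": 4, "ram_gb": 16, "storage_gb": 100},
}
# Actual state, as it might be reported by the cloud provider.
actual = {
    "web-server": {"cpus": 1, "ram_gb": 4},
    "legacy-cache": {"cpus": 1, "ram_gb": 2},
}

for action, name in plan(desired, actual):
    print(action, name)
```

Since the desired state is just text, it can be reviewed, tested and rolled back like any other code change.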

There are a variety of tools to make CI/CD processes more efficient and easier to manage. You can choose from cloud-native tools, such as:

- Jenkins X: this refreshed version of Jenkins is an open source automation server that helps automate tasks connected with building, testing and deploying software.

- Tekton: an open source framework for creating CI/CD systems. As a Kubernetes-native solution, Tekton enables you to build, test and deploy your software across multiple cloud providers and on-premises systems.

Or, if you’re looking for a cloud-based solution, you can consider Microsoft Azure Pipelines (you can use it in any cloud environment) or AWS CodePipeline, which is easily integrated not only with Amazon solutions but also with tools like GitHub or TeamCity.

Day 2 - daily operations routine

Once the software is ready, it goes live and customers start using it. Day 2 begins here, and elements including software maintenance and customer support are introduced. The software itself will evolve in order to adapt to changing needs and customer requirements. During Day 2, the main focus is on establishing a feedback loop. We monitor how the app is working, gather feedback from users and send it to the development team, which implements it in the product and releases a new version. The military concept of the OODA loop (Observe-Orient-Decide-Act) aptly captures what occurs in this phase.

- Observe - obtain information from monitoring systems (resource usage and metrics, application performance monitoring).

- Orient - perform root cause analysis of the problem.

- Decide - find a way to solve the issues arising.

- Act - implement the solutions.

As in combat, this cycle is repeated continuously.
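The four steps can be sketched as a small pipeline. This is a deliberately simplified illustration: the "orient" step here is only a threshold check standing in for real root cause analysis, and the metric names, thresholds and runbook entries are hypothetical.

```python
def observe(read_metrics):
    """Observe: collect current metrics from a monitoring source."""
    return read_metrics()


def orient(metrics, thresholds):
    """Orient: stand-in for root cause analysis that flags breached metrics."""
    return [name for name, value in sorted(metrics.items())
            if name in thresholds and value > thresholds[name]]


def decide(problems, runbook):
    """Decide: pick a remediation for each problem the runbook covers."""
    return [runbook[problem] for problem in problems if problem in runbook]


def act(actions, execute):
    """Act: carry out the chosen remediations."""
    for action in actions:
        execute(action)


def ooda_cycle(read_metrics, thresholds, runbook, execute):
    """Run one pass of the loop and return the actions that were taken."""
    metrics = observe(read_metrics)
    problems = orient(metrics, thresholds)
    actions = decide(problems, runbook)
    act(actions, execute)
    return actions


# Hypothetical inputs for a single pass through the loop.
thresholds = {"cpu_percent": 80, "error_rate": 0.01}
runbook = {"cpu_percent": "scale out", "error_rate": "roll back release"}
performed = []
ooda_cycle(lambda: {"cpu_percent": 93, "error_rate": 0.002},
           thresholds, runbook, performed.append)
print(performed)
```

In a real system the cycle would be triggered on a schedule or by alerts, and the execute step would call an automation tool rather than append to a list.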

Monitoring procedures are based on the requirements defined in the Service Level Agreement (SLA). The SLA builds on Service Level Objectives (SLOs), which set target values for our Service Level Indicators (SLIs), the measured metrics of service health. Automation and monitoring are the key to tackling Day 2 responsibilities.
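To show how an indicator, an objective and the remaining margin relate, here is a minimal sketch based on request counts. The numbers and the 99.9% objective are assumptions for the example, and the "error budget" calculation is a commonly used convention rather than something defined in this post.

```python
def availability_sli(successful: int, total: int) -> float:
    """SLI: the measured fraction of requests that succeeded."""
    if total == 0:
        return 1.0  # no traffic means nothing has failed
    return successful / total


def error_budget_remaining(sli: float, slo: float) -> float:
    """Fraction of the error budget left: 1.0 is untouched, 0 or less is spent."""
    if not 0 < slo < 1:
        raise ValueError("slo must be strictly between 0 and 1")
    allowed_failure = 1.0 - slo  # failure rate the objective tolerates
    actual_failure = 1.0 - sli   # failure rate that was measured
    return 1.0 - actual_failure / allowed_failure


# Hypothetical month of traffic measured against a 99.9% availability SLO.
sli = availability_sli(successful=99_950, total=100_000)
remaining = error_budget_remaining(sli, slo=0.999)
print(f"SLI: {sli:.4%}, error budget remaining: {remaining:.0%}")
```

When the remaining budget approaches zero, the team has an objective signal to slow down releases and focus on stability before the SLA itself is at risk.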

Several classes of tools help get the Day 2 job done. There are automation and orchestration tools such as Ansible or Kubernetes, which help manage application environments.

The application performance monitoring (APM) class groups software that helps IT admins monitor app performance and thus provide a high-quality user experience. Among the many solutions on the market, we can name:

- Datadog: correlates application performance with underlying infrastructure metrics in one unified platform. Datadog helps optimize the application and make code more efficient by analyzing and isolating dependencies, removing bottlenecks, reducing latency and tracking errors.

- Dynatrace: a tool used to monitor and manage the performance of software applications. Worth mentioning is Dynatrace's proactive approach, which decreases the time to resolve a problem and saves the resources needed to identify and fix the issue.

- Zenoss: monitoring and analytics software available in three versions: open source, commercial, and ZaaS (Zenoss as a Service). It automatically monitors application events, provides instant signals and alerts, and easily integrates with other solutions, such as Dynatrace.

Finally, ticketing systems such as Servicedesk or Zendesk handle customer service, enabling users to report problems and issues related to the apps they are running.

Cloud as a game changer

In the pre-cloud era, the separation between these phases was distinctly visible, but today, with the cloud as our everyday reality, things are constantly changing. Using the cloud and modern software development practices makes it much easier to handle the ever-changing requirements in the software development lifecycle. The Continuous Integration/Continuous Delivery methodology enables us to respond dynamically to customer feedback and improve apps in real time, without waiting for a major release to introduce improvements.

One of the benefits of DevOps in cloud-based and cloud-native software architecture is the shift left, which means that steps traditionally left until the very end can now be performed much sooner. Among other things, this has led Day 1 and Day 2 to overlap. Moreover, the biggest pain point of traditional software development was the transition from Day 1 to Day 2 - the handover from developers to operators. Now, DevOps shows how to handle this problem: initiate the processes early and keep them running until the end of the application’s life. Last but not least, the toolchain is becoming complete, making it possible both to perform Day 1 tasks and to adapt to Day 2 changes. The tools can be tailored to our needs, and everyone involved in the process can use the same set of tools.

How else can implementing the Day 0/Day 1/Day 2 approach benefit your business? To get the most out of it, Day 2 is the phase on which you should focus the most. Why? Because Day 2 provides you with the outcome. Here, you can look for room for improvement and gains in efficiency that enable your engineers to better address new or updated business requirements. Automating system maintenance tasks is one way to achieve that. Implementing CI/CD can help with better automation and benefit your business.